Author: Gabriel Rüeck

Getting around Corporate VPN Restrictions

Executive Summary

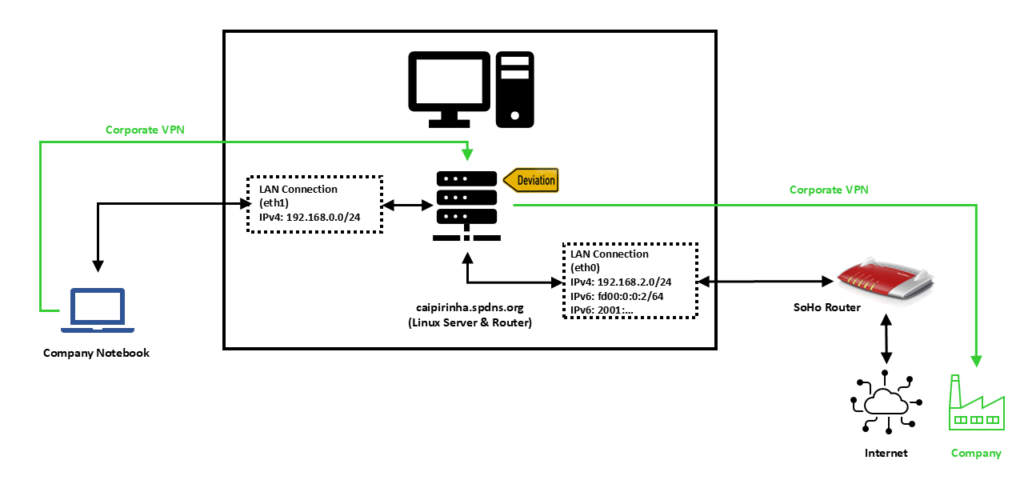

This blog post explains how Policy Routing on a Linux server in the Home Office can help you to bypass access restrictions by a corporate VPN to your local LAN.

Background

The need for this approach surged when I realized that while being in the corporate VPN with my company notebook, I could not access my home network anymore.

Preconditions

In order to use the approach described here, you should:

- … have access to a Linux machine which is already properly configured on its principal network interface (e.g., eth0) and which has an additional network card (e.g., eth1) available

- … have knowledge of routing concepts, networks, some understanding of shell scripts and configuration files

- … have already setup meaningful services like NTP, samba or MariaDB / MySQL on the Linux machine

- … know related system commands like sysctl

- … familiarize yourself with [1] and read at least a bit through [2]

Description and Usage

In this setup, we have a full-blown SoHo Linux server on an internal network 192.168.2.0/24 that is also used by all other devices in the same home. For the approach described here, this Linux server needs to be equipped with an additional network card (eth1), and we will use this connection exclusively in order to connect the company notebook. A DHCP and DNS server on the Linux server shall span the network 192.168.0.0/24 on the interface eth1, and the company notebook will get an IP address in this network. We assume that for remote work (Home Office), the user has to use a corporate VPN which is then channeled through our Linux server. For the approach described here, it is important that the corporate VPN on the company notebook does not channel all traffic of the company notebook through the VPN, but that it is a split VPN that leaves some routes outside of the VPN. Many corporate VPN are essentially split VPN and typically exclude IP ranges that connect to Microsoft® services (M365, Teams, SharePoint, etc.) or dedicated streaming services used by the company so that this traffic is not led through the company (it would anyway be fed into the company and directly be sent out to Microsoft® only using precious bandwidth of the company’s internet connection). We will single out one IP address of the IP ranges that are outside the corporate VPN and use the fact that legitimate traffic which might go to this IP address almost certainly will be either on port 80 (http) or on port 443 (https). An iptables command will help us to deviate traffic on this one IP address that shall go to dedicated services on our Linux server.

We need some auxiliary services in order to make things work perfectly, and they are described in the following sections.

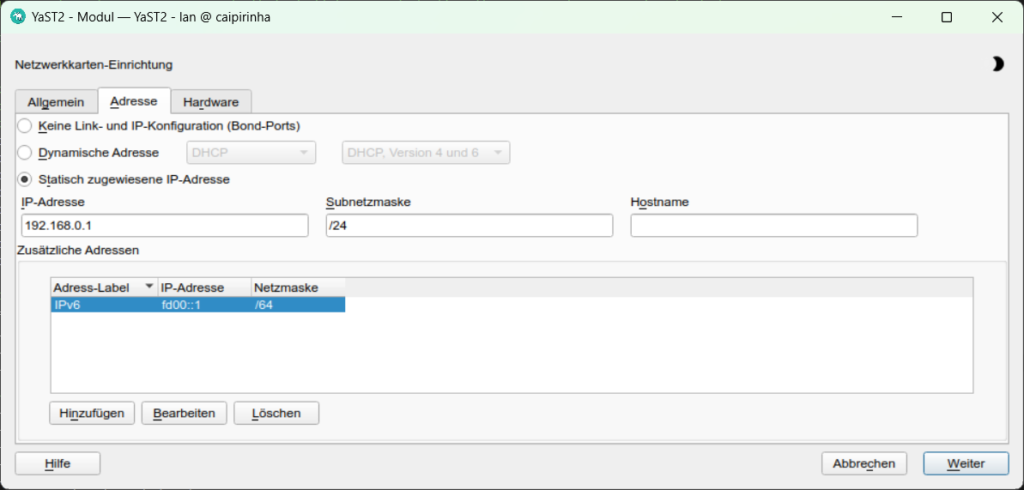

Setting up eth1

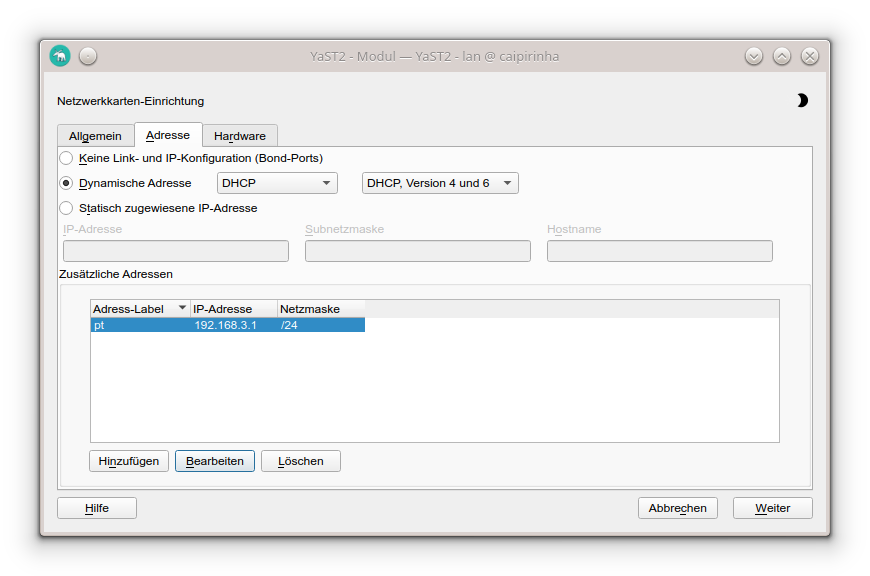

The first step is to set up the interface eth1 and to assign static IP addresses for IPv4 and IPv6. In order to make life easy for me, I use YaST2 on my openSuSE system and assign the addresses 192.168.0.1 and fd00::1 to the Linux server on eth1.

Providing DHCP and DNS on eth1

The company notebook needs to get an IP address when it is booted up, and since it is connected only to eth1 on the Linux server, this means that the Linux server shall provide an IP address via DHCP so that we do not have to configure a static IP on the company notebook. The package dnsmasq can provide both DHCP as well as cache DNS. That is very practical as it allows us for example, to have only DNS on eth0 where the SoHo router already is the DHCP master, but to configure both DHCP and a caching DNS on eth1. The following configuration file will exactly do that (it uses only a subset of the capabilities of dnsmasq):

/etc/dnsmasq.conf

# Never forward addresses in the non-routed address spaces.

bogus-priv

# If you don't want dnsmasq to read /etc/resolv.conf or any other

# file, getting its servers from this file instead (see below), then

# uncomment this.

no-resolv

# If you don't want dnsmasq to poll /etc/resolv.conf or other resolv

# files for changes and re-read them then uncomment this.

no-poll

# Add other name servers here, with domain specs if they are for

# non-public domains.

server=8.8.8.8

server=8.8.4.4

server=9.9.9.9

server=1.1.1.1

# If you want dnsmasq to listen for DHCP and DNS requests only on

# specified interfaces (and the loopback) give the name of the

# interface (eg eth0) here.

# Repeat the line for more than one interface.

interface=eth0

interface=eth1

# If you want dnsmasq to provide only DNS service on an interface,

# configure it as shown above, and then use the following line to

# disable DHCP and TFTP on it.

no-dhcp-interface=eth0

# Uncomment this to enable the integrated DHCP server, you need

# to supply the range of addresses available for lease and optionally

# a lease time. If you have more than one network, you will need to

# repeat this for each network on which you want to supply DHCP

# service.

dhcp-range=tag:eth1,192.168.0.10,192.168.0.254,24h

# Enable DHCPv6. Note that the prefix-length does not need to be specified

# and defaults to 64 if missing/

dhcp-range=tag:eth1,fd00:0:0:0::A, fd00:0:0:0::C8, 64, 24h

# Assign a pseudo-static IPv4 to the the company notebook identified by its MAC.

# Assign a pseudo-static IPv6 to the the company notebook identified by its DUID.

# Note the MAC addresses CANNOT be used to identify DHCPv6 clients.

dhcp-host=80:3f:5d:d2:4b:57,FHD4QV3,192.168.0.195,24h

dhcp-host=id:00:01:00:01:2c:e6:bc:51:ac:91:a1:61:03:30,FHD4QV3,[fd00::c3/64]

# Set the NTP time server addresses

dhcp-option=option:ntp-server,192.168.2.3

# Send Microsoft-specific option to tell windows to release the DHCP lease

# when it shuts down. Note the "i" flag, to tell dnsmasq to send the

# value as a four-byte integer - that's what Microsoft wants. See

# https://learn.microsoft.com/en-us/openspecs/windows_protocols/ms-dhcpe/4cde5ceb-4fc1-4f9a-82e9-13f6b38d930c

dhcp-option=vendor:MSFT,2,1i

# Include all files in a directory which end in .conf

conf-dir=/etc/dnsmasq.d/,*.confIn this configuration, we can see that on eth0, we will not enable DHCP (Option no-dhcp-interface=eth0). On eth1, we want DHCP to be active. Furthermore, we propagate the server’s address 192.168.2.3 as NTP server. For this, the NTP service needs to be enabled, of course, otherwise that would be pointless.

With the configuration option dhcp-host, we can assign a pseudo-static IPv4 address (192.168.0.195) to the company notebook identified by its MAC address. And using the same option for a second time, we can also assign a pseudo-static IPv6 address to the company notebook. However, in order to accomplish this, we need to know the DHCP Unique Identifier (DUID) of the company notebook. With dnsmasq, we can obtain the DUID by leaving out the option dhcp-host at first and then scanning in the log file of dnsmasq (or, in the syslog if no dedicated log file has been specified) which DUID the notebook has. In the log file, we might find entries like:

2026-02-27T09:51:39.478262+01:00 caipirinha dnsmasq-dhcp[14776]: DHCPSOLICIT(eth1) 00:01:00:01:2c:e6:bc:51:ac:91:a1:61:03:30

2026-02-27T09:51:39.478460+01:00 caipirinha dnsmasq-dhcp[14776]: DHCPADVERTISE(eth1) fd00::c3 00:01:00:01:2c:e6:bc:51:ac:91:a1:61:03:30 fhd4qv3The DUID can then be identified as 00:01:00:01:2c:e6:bc:51:ac:91:a1:61:03:30.

dnsmasq uses the file /etc/hosts as well as upstream DNS servers for its own DNS service. The advantage of this is that – if your file /etc/hosts is properly maintained – you can also use the device names listed there. As upstream DNS servers from which dnsmasq itself gets the IP resolution, I have configured four popular ones (8.8.8.8, 8.8.4.4, 9.9.9.9, 1.1.1.1), but you could also just list the IP of your SoHo router or of the DNS resolver of your internet provider.

Providing web proxy services

If we want to use unrestricted and unfiltered internet also on the company notebook, then we need to set up a web proxy on our Linux server and use a separate browser on the company notebook on which we configure the Linux server as web proxy. As on company notebooks, you might not be allowed to install software by yourself, Mozilla Firefox, Portable Edition might be an option. This is a browser that does not require installation but can just be placed on the hard disk of the company notebook. In this browser, you can configure a dedicated proxy server without having to change the system configuration or default proxy setting of the company notebook. On the Linux server, the package tinyproxy is an easy-to-configure and lightweight proxy server well suited for our purpose. Below is a typical configuration of tinyproxy. The configuration option Port sets the port on which tinyproxy will listed for incoming connections, in our case I chose 4077.

/etc/tinyproxy.conf

# User/Group: This allows you to set the user and group that will be

# used for tinyproxy after the initial binding to the port has been done

# as the root user. Either the user or group name or the UID or GID

# number may be used.

#

User tinyproxy

Group tinyproxy

# Port: Specify the port which tinyproxy will listen on. Please note

# that should you choose to run on a port lower than 1024 you will need

# to start tinyproxy using root.

#

Port 4077

# Bind: This allows you to specify which interface will be used for

# outgoing connections. This is useful for multi-home'd machines where

# you want all traffic to appear outgoing from one particular interface.

#

Bind 192.168.2.3

# Timeout: The maximum number of seconds of inactivity a connection is

# allowed to have before it is closed by tinyproxy.

#

Timeout 600

# LogFile

#

LogFile "/var/log/tinyproxy/tinyproxy.log"

# LogLevel: Warning

#

# Set the logging level. Allowed settings are:

# Critical (least verbose)

# Error

# Warning

# Notice

# Connect (to log connections without Info's noise)

# Info (most verbose)

#

LogLevel Warning

# PidFile

#

PidFile "/var/run/tinyproxy/tinyproxy.pid"

# XTinyproxy: Tell Tinyproxy to include the X-Tinyproxy header, which

# contains the client's IP address.

#

XTinyproxy Yes

# MaxClients: This is the absolute highest number of threads which will

# be created. In other words, only MaxClients number of clients can be

# connected at the same time.

#

MaxClients 400

# Allow: Customization of authorization controls. If there are any

# access control keywords then the default action is to DENY. Otherwise,

# the default action is ALLOW.

#

Allow 127.0.0.1

Allow ::1

Allow 192.168.0.0/16

# ViaProxyName: The "Via" header is required by the HTTP RFC, but using

# the real host name is a security concern. If the following directive

# is enabled, the string supplied will be used as the host name in the

# Via header; otherwise, the server's host name will be used.

#

ViaProxyName "tinyproxy"

# Filter: This allows you to specify the location of the filter file.

#

Filter "/etc/tinyproxy/filter"

# FilterURLs: Filter based on URLs rather than domains.

#

FilterURLs On

# FilterDefaultDeny: Change the default policy of the filtering system.

# If this directive is commented out, or is set to "No" then the default

# policy is to allow everything which is not specifically denied by the

# filter file.

#

# However, by setting this directive to "Yes" the default policy becomes

# to deny everything which is _not_ specifically allowed by the filter

# file.

#

FilterDefaultDeny Notinyproxy also allows filtering of internet domains. I know I said before that we want unrestricted and unfiltered internet access, but in this case, we can use the file /etc/tinyproxy/filter in order to filter out nasty and annoying advertisement and tracking domains. Suitable filter lists can be found on the internet and can just be copied to /etc/tinyproxy/filter. Or you might add just these domains whose advertisements annoy you most when you access web pages. I personally use a mixture of both.

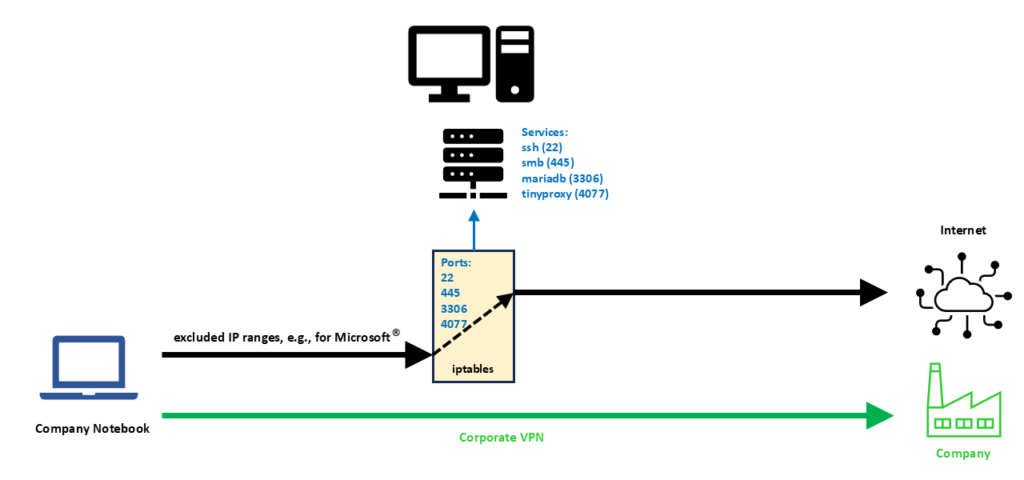

Re-routing traffic to our server

In my personal case, the corporate VPN client (a Cisco VPN client) is so helpful that it provides me with the IP ranges that are excluded from the corporate VPN. Out of these IP ranges, I did pick one IP address, in my case, 192.229.232.200. The selection was completely arbitrary; I could have chosen any other IP address from the IP ranges that are excluded from the corporate VPN. The following commands prepare the Linux server for our desired setup:

ip link set eth1 up

iptables -t nat -A POSTROUTING -s 192.168.0.0/24 -o eth0 -j SNAT --to-source 192.168.0.1

ip6tables -t nat -A POSTROUTING -s fd00:0:0:0::/64 -o eth0 -j MASQUERADE

iptables -t nat -A PREROUTING -i eth1 -p tcp -d 192.229.232.200 --match multiport --dports 22,445,3306,4077 -j DNAT --to 192.168.2.3

systemctl start dnsmasq.service

systemctl start tinyproxy.serviceLet us discuss these commands in detail:

- The first command brings up the network interface eth1. This command might not be necessary if you have a switch connected to eth1 of the Linux Server or if the company notebook is powered up before you boot up the Linux server. Otherwise, if you boot up the Linux server and nothing is connected to eth1, the interface might not come up.

- The second command translates traffic from the network on eth1 to the SoHo network 192.168.2.0/24 and to the Linux server’s address on that network (192.168.2.3). Of course, IPv4 routing needs to be enabled on the Linux server. This command enables that (even without the corporate VPN active), the company notebook can get access to the internet from its otherwise isolated network 192.168.0.0/24.

- The third command does the same for the IPv6 domain and the network fd00:0:0:0::/64 on eth1. Probably we would not even need IPv6 on the network of the company notebook, few companies already work with IPv6. If we leave IPv6 away, we should however also delete the configuration option dhcp-host for IPv6 in /etc/dnsmasq.conf.

- The fourth command is very important. It tells the server to deviate connections on one of the TCP ports 22, 445, 3306, 4077 originally destined to the IP address 192.229.232.200 to the new IP address 192.168.0.1, the IP address of the Linux server on eth1.

- The fifth and sixth command start the services dnsmasq and tinyproxy.

We can see from the fourth command that the scope for deviating connections to the Linux server is very narrow. First, we only consider TCP connections, and we single out only four IP ports that probably otherwise would not be used in conjunction with the IP address 192.229.232.200. With this, we can access the following services on our Linux server:

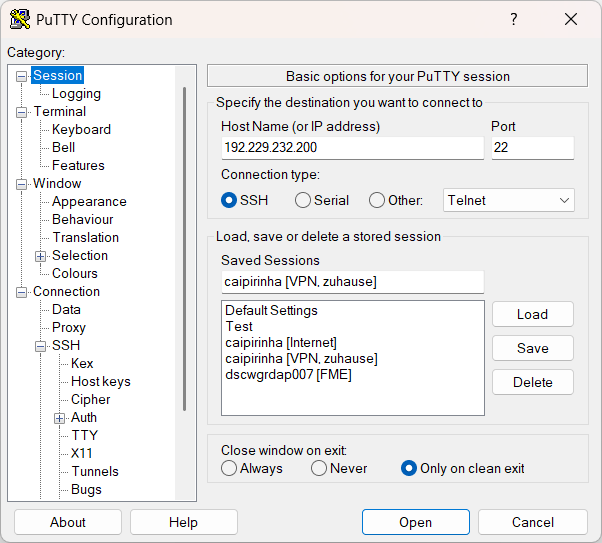

- ssh (Port 22): On the company notebook, we have to configure our ssh client (e.g., puTTY) for a connection to 192.229.232.200:22.

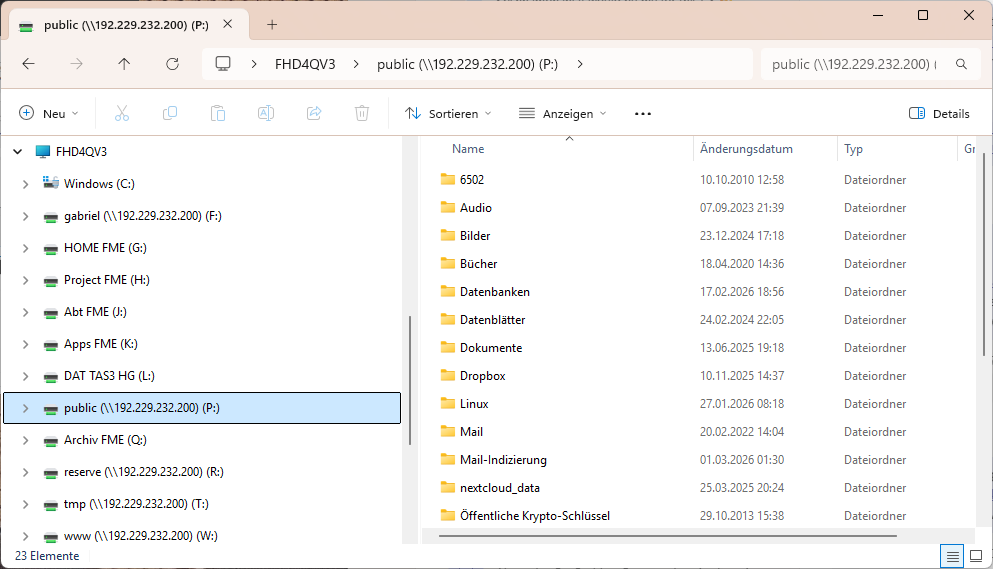

- smb (Port 445): Of course, the Linux server must have a smb service running already; the configuration of it is not part of this article. Then, on the company notebook, we can access a network drive by using \\192.229.232.200\network_share.

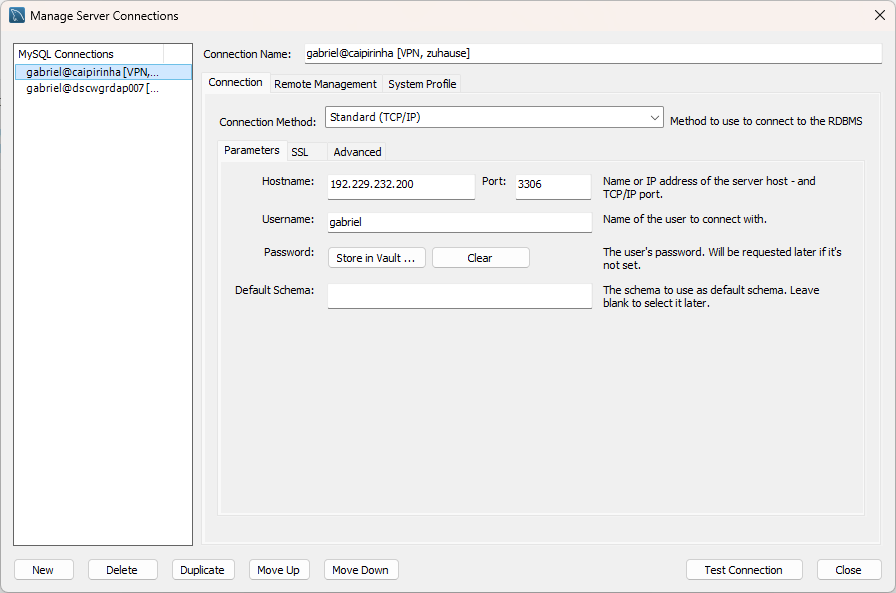

- mariadb / mysql (Port 3306): Of course, the Linux server must have a mysql service running already; the configuration of it is not part of this article. Then, on the company notebook, we can access the service for example with the MySQL Workbench by connecting to 192.229.232.200:3306.

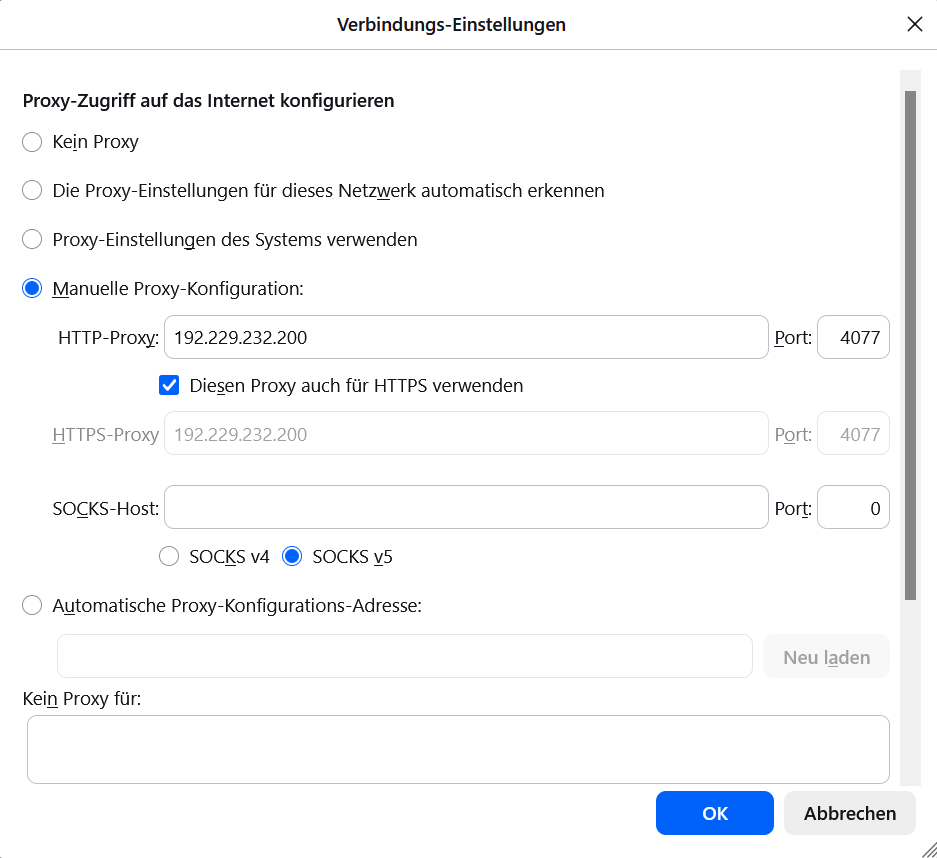

- tinyproxy (Port 4077): We configure Mozilla Firefox, Portable Edition and set the proxy to 192.229.232.200, Port 4077 for both http and https.

The following images show the configuration of related programs and apps on the company notebook.

Of course, you can modify the iptables command (fourth command above) to deviate even more ports, depending on the services that you have available on your own Linux server.

Conclusion

With a second LAN, DHCP, DNS, a proxy server like tinyproxy, some clever commands and a split corporate VPN, we can bypass corporate VPN restrictions that would not allow us to access our local network and services on our Linux server otherwise. With an additional browser on the company notebook like Mozilla Firefox, Portable Edition, this will even enable us to bypass restrictions and browsing policies that corporations might have put forward.

Having said that, I would always recommend you stick to the IT regulations of your company, of course…

Sources

Getting around TV App Geo-Blocking

Executive Summary

This blog post explains how Policy Routing on a Linux server together with commercial VPNs to other countries can help you to put your client devices (TV, smartphones) logically into the internet of other countries in order to get around geo-blocking.

Background

The idea or merely, the need for this approach, surged when I installed an app of a Portuguese TV provider and could not even watch the news journal due to geo-blocking. Additionally, I wanted to have a comfortable solution with which I can switch the TV to different countries while I am sitting in my TV chair with my smartphone at hand 😁.

Preconditions

In order to use the approach described here, you should:

- … have access to a Linux machine which is already properly configured on its principal network interface (e.g., eth0)

- … have the package openvpn installed on the Linux machine (preferably from a repository of your Linux distribution)

- … have access to a commercial VPN provider allowing you to run several parallel client connections on the same machine

- … have knowledge of routing concepts, networks, some understanding of shell scripts and configuration files

- … know related system commands like sysctl

- … familiarize yourself with [1], [3], [4], [5]

Description and Usage

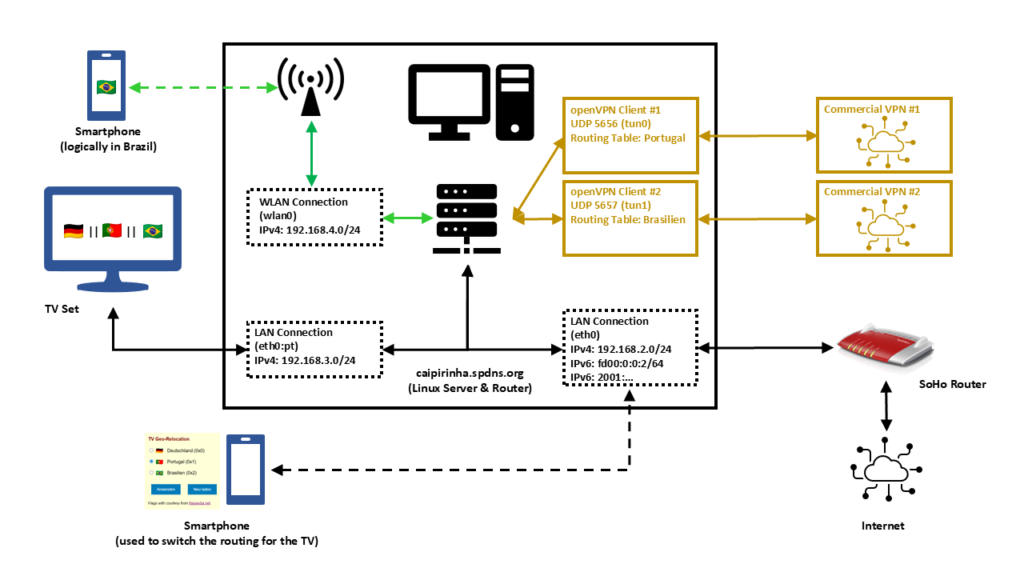

In this setup, we have a full-blown SoHo Linux server on an internal network 192.168.2.0/24 that is also used by all other devices in the same home. Subsequently, we will connect this Linux server via a commercial VPN to two endpoints, one endpoint in Portugal and one endpoint in Brazil. We will also create two additional networks for our SoHo environment:

- 192.168.4.0/24 will be spread via WLAN (WiFi) and will constantly logically be “in Brazil”. This network can simply be selected by a smartphone at home, and the smartphone will have a Brazilian internet connection while still being able to access all resources in the home network.

- 192.168.3.0/24 will an overlay on our wired SoHo network. The TV set will be the only client in this network. We will make the endpoint of this network selectable, that is, one shall be able to select whether this network is in Germany, in Portugal, or in Brazil.

That setup is suited to my personal preferences, but of course, after having read through this article, you will know sufficiently to suit the setup to your preferences and demands.

OpenVPN Client Configuration

For the setup described below, we need two client VPN connections, to Portugal and to Brazil. As I do not have infrastructure outside of Germany, I use a commercial VPN provider, in my case this is Private Internet Access®. However, there are several commercial VPNs that you can also use; the important thing is that they allow several active connections from one device and that you can configure and adapt the VPN configuration file, preferably for an openvpn connection (as this will also be described here). The client configuration files listed here use UDP, a split-tunnel setup and also contain all the necessary certificates in one file. The login credentials are stored in another file named /etc/openvpn/pia.login. The certificates of the configuration files have been omitted here for readability reasons. An important configuration command is route-nopull as it inhibits that we pull (default) routes from the commercial VPN server. After all, we want to specify ourselves which IP packets shall use which outgoing network.

UDP-based split VPN to Portugal

# Konfigurationsdatei für den openVPN-Client auf CAIPIRINHA zur Verbindung nach Portugal mit PIA

auth-user-pass /etc/openvpn/pia.login

auth-nocache

auth-retry nointeract

auth sha1

client

compress

dev tun0

disable-occ

log /var/log/openvpn_PT.log

lport 5457

mute 20

proto udp

persist-key

persist-tun

remote pt.privacy.network 1198

remote-cert-tls server

reneg-sec 0

resolv-retry infinite

route-nopull

script-security 2

status /var/run/openvpn/status_PT

tls-client

up /etc/openvpn/start_piavpn.sh

down /etc/openvpn/stop_piavpn.sh

verb 3

<crl-verify>

-----BEGIN X509 CRL-----

...

-----END X509 CRL-----

</crl-verify>

<ca>

-----BEGIN CERTIFICATE-----

...

-----END CERTIFICATE-----

</ca>UDP-based split VPN to Brazil

# Konfigurationsdatei für den openVPN-Client auf CAIPIRINHA zur Verbindung nach Brasilien mit PIA

auth-user-pass /etc/openvpn/pia.login

auth-nocache

auth-retry nointeract

auth sha1

client

compress

dev tun1

disable-occ

log /var/log/openvpn_BR.log

lport 5458

mute 20

proto udp

persist-key

persist-tun

remote br.privacy.network 1198

remote-cert-tls server

reneg-sec 0

resolv-retry infinite

route-nopull

script-security 2

status /var/run/openvpn/status_BR

tls-client

up /etc/openvpn/start_piavpn.sh

down /etc/openvpn/stop_piavpn.sh

verb 3

<crl-verify>

-----BEGIN X509 CRL-----

...

-----END X509 CRL-----

</crl-verify>

<ca>

-----BEGIN CERTIFICATE-----

...

-----END CERTIFICATE-----

</ca>Both configuration files call upon scripts (/etc/openvpn/start_piavpn.sh and /etc/openvpn/stop_piavpn.sh) which are executed upon start and upon termination of the VPN. start_piavpn.sh (which needs the tool ipcalc to be installed on the server) populates the routing table Portugal or Brasilien, depending on which client configuration has called the script. It furthermore blocks incoming new connections from the commercial VPNs for security reasons. Normally, you should not experience incoming connections on your commercial VPN (unless this has been wanted and ordered by you), however, I have seen different behavior in the past. Finally, the script start_piavpn.sh sets the correct default route in the corresponding routing table. The script stop_piavpn.sh deletes the blocking of incoming requests. There is no need to delete the previously active default routes from the routing tables Portugal or Brasilien as they will vanish anyway with the termination of the VPN connection. All other configuration options have been discussed in detail already in [1], [2].

start_piavpn.sh

#!/bin/bash

#

# This script sets the VPN parameters in the routing tables "main", "Portugal", and "Brasilien" once the connection has been successfully established.

# This script requires the tool "ipcalc" which needs to be installed on the target system.

# Set the correct PATH environment

PATH='/sbin:/usr/sbin:/bin:/usr/bin'

VPN_DEV=$1

VPN_SRC=$4

VPN_MSK=$5

VPN_GW=$(ipcalc ${VPN_SRC}/${VPN_MSK} | sed -n 's/^HostMin:\s*\([0-9]\{1,3\}\.[0-9]\{1,3\}\.[0-9]\{1,3\}\.[0-9]\{1,3\}\).*/\1/p')

VPN_NET=$(ipcalc ${VPN_SRC}/${VPN_MSK} | sed -n 's/^Network:\s*\([0-9]\{1,3\}\.[0-9]\{1,3\}\.[0-9]\{1,3\}\.[0-9]\{1,3\}\/[0-9]\{1,2\}\).*/\1/p')

case "${VPN_DEV}" in

"tun0") ROUTING_TABLE='Portugal';;

"tun1") ROUTING_TABLE='Brasilien';;

esac

iptables -t filter -A INPUT -i ${VPN_DEV} -m state --state NEW,INVALID -j DROP

iptables -t filter -A FORWARD -i ${VPN_DEV} -m state --state NEW,INVALID -j DROP

ip route add ${VPN_NET} dev ${VPN_DEV} proto static scope link src ${VPN_SRC} table ${ROUTING_TABLE}

ip route replace default dev ${VPN_DEV} via ${VPN_GW} table ${ROUTING_TABLE}stop_piavpn.sh

#!/bin/bash

#

# This script removes some routing table entries when the connection is terminated.

# Set the correct PATH environment

PATH='/sbin:/usr/sbin:/bin:/usr/bin'

VPN_DEV=$1

VPN_SRC=$4

VPN_MSK=$5

iptables -t filter -D INPUT -i ${VPN_DEV} -m state --state NEW,INVALID -j DROP

iptables -t filter -D FORWARD -i ${VPN_DEV} -m state --state NEW,INVALID -j DROPRouting Tables

In order to use Policy Routing, we set up routing tables as described in [1], and we describe these routing tables in /etc/iproute2/rt_tables:

#

# reserved values

#

255 local

254 main

253 default

0 unspec

#

# local

#

240 Portugal

241 BrasilienThe idea here is to direct all IP traffic that shall go to Portugal to the routing table Portugal, and to direct all IP traffic that shall go to Brazil to the routing table Brasilien. The routing table main will be used for all other traffic; it is part of the default configuration of /etc/iproute2/rt_tables.

Local LAN for the TV set

The network that so far has been used on my Linux server has been 192.168.2.0/24, and the corresponding server interface has been eth0. We now need to add one more network to this interface. In order to make that addition permanent and my life easy, I did that via the graphical YaST2 interface.

In my case, I chose the address label “pt” (because the original idea was to use this network exclusively for the traffic to Portugal); however, you can choose any label that you wish. While the Linux server usually receives a pseudo-static IP address (192.168.2.3) in the SoHo network 192.168.2.0/24 by the SoHo router (a FRITZ!Box), in our new network 192.168.3.0/24, the server gets the static IP address (192.168.3.1). Clients in this network will consequently require a static IP address configuration; we cannot use DHCP as this network runs on the same physical network infrastructure as the SoHo network 192.168.2.0/24 which already has the FRITZ!Box as DHCP master. In my case, I therefore have configured the TV set (the only client in the network 192.168.3.0/24) with the setup:

- IP address: 192.168.3.186

- Netmask: 255.255.255.0

- Gateway: 192.168.3.1

- DNS server: 192.168.3.1

As DNS I have used the server itself as I have a DNS relay running on the Linux server. If that was not the case, I could also have used 192.168.2.1 which is the address of the FRITZ!Box.

Local WLAN (WiFi) for wireless devices

For the WLAN (WiFi) network I have equipped the Linux server with a PCI Express WLAN card (in my case an old Asus PCE-N10, but I would recommend you a newer one in the 5 GHz band) and attached an external antenna to it. This WiFi card shall act as access point (master). I did not succeed to make that work with YaST2 in conjunction with WPA encryption, and subsequent to my failure, I consulted an Artificial Intelligence (AI) that recommended me to use the package hostapd which needs to be installed on the Linux server. I did so, and after some research and experiments, I came up with a suitable configuration:

/etc/hostapd.conf

# Basis-Einstellungen

interface=wlan0

driver=nl80211

ssid=Querstrasse 8 [BR]

hw_mode=g

channel=1 # 1-13, vermeiden Sie DFS-Kanäle (52+)

ieee80211n=0 # Optional für bessere Phones, aber ungünstig bei schlechter Verbindung

# WPA2-PSK (wpa=2 für WPA2 only, TKIP/CCMP für Kompatibilität)

wpa=2

wpa_passphrase=my_secret_password

wpa_key_mgmt=WPA-PSK WPA-PSK-SHA256

wpa_pairwise=TKIP CCMP

rsn_pairwise=CCMP

# Sonstiges

macaddr_acl=0 # MAC address -based authentication nicht aktivieren

auth_algs=1 # Open System Authentication

ignore_broadcast_ssid=0 # SSID frei sichtbar

wmm_enabled=0 # WMM deaktiviert wegen schlechter Verbindung

beacon_int=75 # Häufigere Beacons wegen schlechter Verbindung

max_num_sta=10 # Max Clients

country_code=DE

country3=0x49 # Indoor environment

ieee80211d=1 # Advertise country-specific parameters

access_network_type=0 # Private network

internet=1 # Network provides connectivity to the Internet

venue_group=7 # 7,1 means Private Residence

venue_type=1

ipaddr_type_availability=10 # Double NATed private IPv4 address

logger_syslog=-1

logger_syslog_level=3 # Notifications only

logger_stdout=-1

logger_stdout_level=2A couple of points in this configuration are important and shall be briefly discussed:

- The network is quite weak in some parts of my house, and so some parameters have been configured for bad network conditions. If you do not have this issue and see a strong WiFi signal all over your place, you might want to change some of the parameters or not set them to dedicated values at all. Consult the original hostapd.conf file for an explanation of all parameters or ask the AI for a suitable setup.

- my_secret_password has to be replaced with the password that you intend to secure your WiFi with, of course.

- I configured the card for Germany, and hence power output is limited to 100 mW, according to the local regulations. A configuration for the USA would allow a higher power output, but this is illegal in Europe. Furthermore, that would only bring a real benefit if your client devices also had higher output power.

- I chose the SSID Querstrasse 8 [BR] (Yes, with white space in the SSID!). If you have old clients, you might want to avoid white spaces in the SSID name.

- I set the values for venue_group, venue_type and access_network_type in order to indicate to prospective clients that this is a private (non-public) network. You might also leave these configuration options away, there would be no real impact.

In order to bring the interface wlan0 to life, we need to issue these three commands:

ip addr add 192.168.4.1/24 dev wlan0

ip link set wlan0 up

systemctl start hostapd.serviceHowever, before we can connect new clients to this WiFi, we need to set up a DHCP server on this network. The small DHCP and DNS caching server dnsmasq is the right tool to be used here.

Providing DHCP and DNS on wlan0

dnsmasq can provide both DHCP as well as cache DNS. That is very practical as it allows us for example, to have only DNS on eth0 where the FRITZ!Box already is the DHCP master, but to configure both DHCP and a caching DNS on wlan0. The following configuration file will exactly do that (it uses only a subset of the capabilities of dnsmasq):

/etc/dnsmasq.conf

# Never forward addresses in the non-routed address spaces.

bogus-priv

# If you don't want dnsmasq to read /etc/resolv.conf or any other

# file, getting its servers from this file instead (see below), then

# uncomment this.

no-resolv

# If you don't want dnsmasq to poll /etc/resolv.conf or other resolv

# files for changes and re-read them then uncomment this.

no-poll

# Add other name servers here, with domain specs if they are for

# non-public domains.

server=8.8.8.8

server=8.8.4.4

server=9.9.9.9

server=1.1.1.1

# If you want dnsmasq to listen for DHCP and DNS requests only on

# specified interfaces (and the loopback) give the name of the

# interface (eg eth0) here.

# Repeat the line for more than one interface.

interface=eth0

interface=wlan0

# If you want dnsmasq to provide only DNS service on an interface,

# configure it as shown above, and then use the following line to

# disable DHCP and TFTP on it.

no-dhcp-interface=eth0

# Uncomment this to enable the integrated DHCP server, you need

# to supply the range of addresses available for lease and optionally

# a lease time. If you have more than one network, you will need to

# repeat this for each network on which you want to supply DHCP

# service.

dhcp-range=tag:wlan0,192.168.4.10,192.168.4.254,24h

# Set the NTP time server addresses

dhcp-option=option:ntp-server,192.168.2.3

# Send Microsoft-specific option to tell windows to release the DHCP lease

# when it shuts down. Note the "i" flag, to tell dnsmasq to send the

# value as a four-byte integer - that's what Microsoft wants. See

# https://learn.microsoft.com/en-us/openspecs/windows_protocols/ms-dhcpe/4cde5ceb-4fc1-4f9a-82e9-13f6b38d930c

dhcp-option=vendor:MSFT,2,1i

# Include all files in a directory which end in .conf

conf-dir=/etc/dnsmasq.d/,*.confIn this configuration, we can see that on eth0, we will not enable DHCP (Option no-dhcp-interface=eth0). As this option is missing for wlan0, we will have DHCP active on wlan0. Furthermore, we propagate the server’s address 192.168.2.3 as NTP server. For this, the NTP service needs to be enabled, of course, otherwise that would be pointless.

While address 192.168.2.3 is not in the network of wlan0 (192.168.4.0/24), we will enable access to that network in the subsequent chapter.

dnsmasq uses the file /etc/hosts as well as upstream DNS servers for its own DNS service. The advantage of this is that – if your file /etc/hosts is maintained – you can also use the device names listed there. As pstream DNS servers from which dnsmasq gets the IP resolution, I have configured four popular ones (8.8.8.8, 8.8.4.4, 9.9.9.9, 1.1.1.1), but you could also just list the IP of your SoHo router or DNS resolver of your internet provider.

Setting the Routing Policy

Now, we must ensure that traffic from our new networks 192.168.3.0/24 and 192.168.4.0/24 can flow as intended. We have to set up the correct routing policy, and for that, we need the following commands whereby the first three commands have already been mentioned (and been executed) in one of the chapters above:

# Start interfaces wlan0

ip addr add 192.168.4.1/24 dev wlan0

ip link set wlan0 up

systemctl start hostapd.service

# Setup the NAT table for the VPNs.

iptables -t nat -F

iptables -t nat -A POSTROUTING -s 192.168.3.0/24 -o eth0 -j SNAT --to-source 192.168.2.3

iptables -t nat -A POSTROUTING -s 192.168.4.0/24 -o eth0 -j SNAT --to-source 192.168.2.3

iptables -t nat -A POSTROUTING -o tun0 -j MASQUERADE

iptables -t nat -A POSTROUTING -o tun1 -j MASQUERADE

# Add the missing routes in the other routing tables

for TABLE in Portugal Brasilien; do

ip route add 192.168.2.0/24 dev eth0 proto kernel scope link src 192.168.2.3 table ${TABLE}

ip route add 192.168.3.0/24 dev eth0 proto kernel scope link src 192.168.3.1 table ${TABLE}

ip route add 192.168.4.0/24 dev wlan0 proto kernel scope link src 192.168.4.1 table ${TABLE}

done

# Setup the MANGLE tables which shape and mark the traffic that shall use other routing tables

iptables -t mangle -F

iptables -t mangle -A PREROUTING -j CONNMARK --restore-mark

iptables -t mangle -A PREROUTING -m mark ! --mark 0 -j ACCEPT

iptables -t mangle -A PREROUTING -i eth0 -s 192.168.3.0/24 -j MARK --set-mark 1

iptables -t mangle -A PREROUTING -i wlan0 -s 192.168.4.0/24 -j MARK --set-mark 2

iptables -t mangle -A PREROUTING -j CONNMARK --save-mark

iptables -t mangle -A OUTPUT -j CONNMARK --restore-mark

iptables -t mangle -A OUTPUT -m mark ! --mark 0 -j ACCEPT

iptables -t mangle -A OUTPUT -j CONNMARK --save-mark

# Add rules for the traffic that shall branch to the new routing table

ip rule add from all fwmark 0x1 priority 5000 lookup Portugal

ip rule add from all fwmark 0x2 priority 5000 lookup BrasilienPersonally, I have these commands executed as part of a shell script that runs after powering up the Linux server and that I also use to control many other services and configurations.

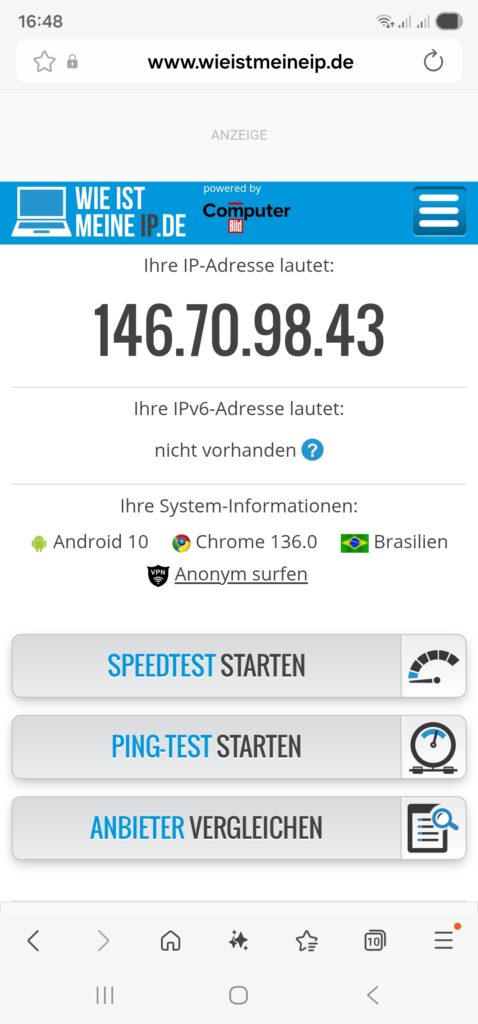

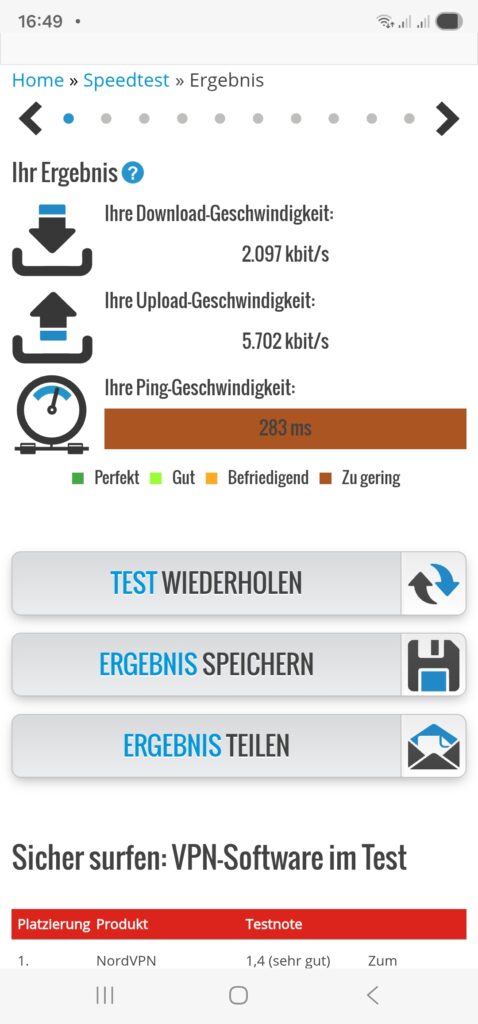

Once we have started the dnsmasq service (systemctl start dnsmasq.service) from the previous chapter and set up the routing policy correctly, we should be able to connect with a smartphone or a notebook to our new WiFi network 192.168.4.0/24 and do first tests like shown here:

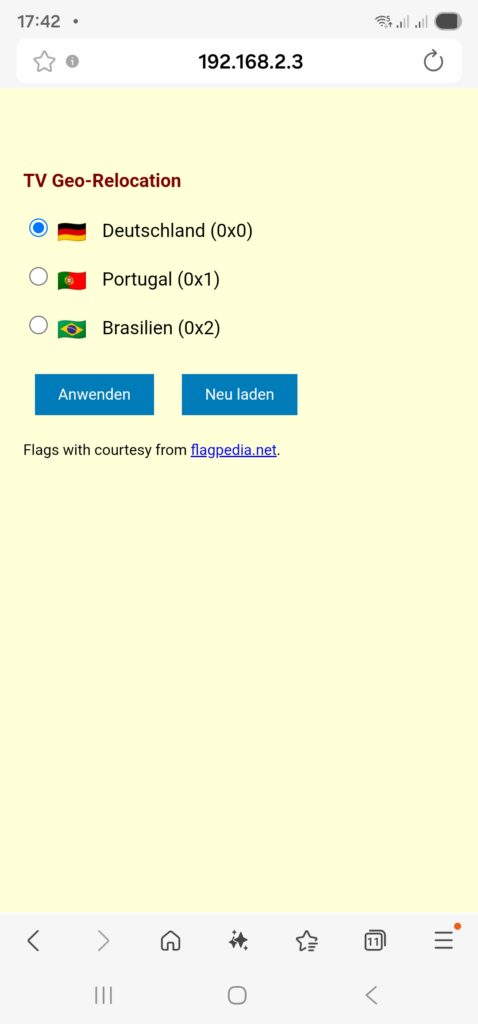

Relocating the TV Set to DE, PT, BR

As a means of convenience, we want to set up a small web page that can be accessed on our smartphone so that we can “re-locate” the TV set between the countries Germany, Portugal, and Brazil. This simple “no frills” page will serve our purpose:

relocate.php:

<!DOCTYPE HTML PUBLIC "-//W3C//DTD HTML 4.0 Transitional//EN">

<html>

<head>

<title>TV Geo-Relocation</title>

<style type="text/css">

a:link { text-decoration:underline; font-weight:normal; color:#0000FF; }

a:visited { text-decoration:underline; font-weight:normal; color:#800080; }

a:hover { text-decoration:underline; font-weight:normal; color:#909090; }

a:active { text-decoration:blink; font-weight:normal; color:#008080; }

h1 { font-family:Arial,Helvetica,sans-serif; font-size:100%; color:maroon; text-indent:0.0cm; }

hr { text-indent:0.0cm; height:3px; width:100%; text-align:left; }

p { font-family:Arial,Helvetica,sans-serif; font-size:80%; color: black; text-indent:0.0cm; }

body { font-family: Arial, sans-serif; background-color:#FFFFD8; max-width: 600px; margin: 50px auto; padding: 20px; }

.flag { width: 24px; height: 16px; vertical-align: middle; margin-right: 10px; }

.radio-group { margin: 20px 0; }

input[type="radio"] { margin-right: 5px; }

button { padding: 10px 20px; margin: 10px; background: #007cba; color: white; border: none; cursor: pointer; }

</style>

<meta name="viewport" content="width=device-width, initial-scale=1.0">

<meta http-equiv="content-language" content="de">

<meta http-equiv="cache control" content="no-cache">

<meta http-equiv="pragma" content="no-cache">

<meta name="author" content="Gabriel Rüeck">

<meta name="date" content="2026-02-17T18:00:00+01:00">

<meta name="robots" content="noindex">

</head>

<body bgcolor="seashell">

<?php

// Setze die neue Markierung für Pakete aus 192.168.3.0/24

if ($_SERVER['REQUEST_METHOD'] === 'POST') {

$fwmark = $_POST['fwmark'] ?? '';

if (in_array($fwmark, ['0','1','2'], true)) {

shell_exec('sudo /srv/www/htdocs/tv/write_status.sh ' . escapeshellarg($fwmark));

}

}

// Hole aktuelle Markierung für Pakete aus 192.168.3.0/24

$current_mark = trim(shell_exec('sudo /srv/www/htdocs/tv/read_status.sh'));

?>

<h1>TV Geo-Relocation</h1>

<form method="POST">

<div class="radio-group">

<label>

<input type="radio" name="fwmark" value="0" <?= $current_mark === '0x0' ? 'checked' : '' ?>>

<img src="https://flagcdn.com/24x18/de.png" srcset="https://flagcdn.com/48x36/de.png 2x" class="flag" alt="🇩🇪"> Deutschland (0x0)

</label><br><br>

<label>

<input type="radio" name="fwmark" value="1" <?= $current_mark === '0x1' ? 'checked' : '' ?>>

<img src="https://flagcdn.com/24x18/pt.png" srcset="https://flagcdn.com/48x36/pt.png 2x" class="flag" alt="🇵🇹"> Portugal (0x1)

</label><br><br>

<label>

<input type="radio" name="fwmark" value="2" <?= $current_mark === '0x2' ? 'checked' : '' ?>>

<img src="https://flagcdn.com/24x18/br.png" srcset="https://flagcdn.com/48x36/br.png 2x" class="flag" alt="🇧🇷"> Brasilien (0x2)

</label>

</div>

<button type="submit">Anwenden</button>

<button type="button" onclick="location.reload()">Neu laden</button>

<p>Flags with courtesy from <a href="https://flagpedia.net" target="_blank">flagpedia.net</a>.</p>

</form>

</body>

</html>This PHP page needs to be put in a suitable directory, and you need to have web server up and running, of course (not described in this article). In my case, the file is located in /srv/www/htdocs/tv/relocate.php. In the header of the PHP file, you can see the line:

<meta name="viewport" content="width=device-width, initial-scale=1.0">This line adapts the width of the page when being called on a smartphone so that it appears with a reasonable scaling on the smartphone screen. Furthermore, as you can see, this web page calls two shell scripts, and those are:

read_status.sh

#! /bin/bash

#

# This script will be executed as root by the PHP scipt relocate.php

#

# Gabriel Rüeck 15.02.2026

#

/usr/sbin/iptables -t mangle --line-numbers -L PREROUTING -n -v | fgrep "eth0" | sed -r 's/^.*MARK (set|and) (0x[[:xdigit:]]+)/\2/'write_status.sh

#! /bin/bash

#

# This script will be executed as root by the PHP scipt relocate.php

#

# Gabriel Rüeck 15.02.2026

#

LINE_NUMBER=$(/usr/sbin/iptables -t mangle --line-numbers -L PREROUTING -n -v | fgrep "eth0" | sed 's/^\([[:digit:]]\+\) \+.*/\1/')

MARK=${1}

/usr/sbin/iptables -t mangle -R PREROUTING ${LINE_NUMBER} -i eth0 -s 192.168.3.0/24 -j MARK --set-mark ${MARK}read_status.sh reads the corresponding routing entry from the mangle table [6], and this information enables the page relocate.php to display the correct country to which the traffic of the TV set if channeled when relocate.php is called initially. write_status.sh is used to modify the correct entry in the mangle table and channel the traffic to the country select on the PHP page. Both read_status.sh as well as write_status.sh need to be executed as root, and therefore, they need to be listed in the sudoers file structure. [7], [8] explain the correct proceeding. In our case, the file /etc/sudoers.d/wwwrun has been set up with the access rights 0440, and this file should have the content:

wwwrun ALL=(root) NOPASSWD: /srv/www/htdocs/tv/read_status.sh

wwwrun ALL=(root) NOPASSWD: /srv/www/htdocs/tv/write_status.shOf course, we do not want arbitrary internet users to change the geo-location of the TV set, and therefore, the access to the PHP page relocate.php must be restricted. An easy, but not entirely secure method is to limit access to this page to the local networks. This can be done in the webserver configuration file (in my case: /etc/apache2/httpd.conf.local) where we add:

# TV Configuration

<Directory /srv/www/htdocs/tv>

Require local

Require ip 192.168.0.0/16 127.0.0.0/8 ::1/128 fd00:0:0::/48

</Directory>This will restrict access to local networks. But this is entirely fool-proof against advanced hacking attacks (see [9] as an example).

The PHP page should ultimately look like this on a smartphone:

Shortcomings

During experiments with this setup, I have come across the following shortcoming:

- On my TV set, a Samsung GQ75Q80, I was able to configure a static IPv4 address. However, it seemed to me that the TV was still getting a dynamic IPv6 address from the FRITZ!Box. I suppose that if one really wants to isolate the TV set from the SoHo network, it would be necessary to use a separate physical network. Luckily, this did not impact the possibility to watch TV with the Portuguese TV app.

Conclusion

With Policy Routing and commercial VPN connections, it is possible to create additional networks in a SoHo environment that will allow client devices to behave as if they were in another country. Basically, you could also achieve that with a VPN connection on the device (smartphone, etc.) itself; however, you then might have access to other services in your SoHo network (printer, etc.). And in the case of a TV set, I am not even sure if there are models that can build up VPN connections themselves. However, the setup described here also shows that it is not trivial as several services need to be configured and act together in a meaningful way.

Sources

- [1] = Setting up Client VPNs, Policy Routing

- [2] = Setting up Dual Stack VPNs

- [3] = iptables – Port forwarding over OpenVpn

- [4] = Routing for multiple uplinks/providers

- [5] = Two Default Gateways on One System

- [6] = Netfilter

- [7] = Classic SysAdmin: Configuring the Linux Sudoers File

- [8] = How To Edit the Sudoers File Safely

- [9] = Forcepoint Research Report: Attacking the internal network from the public Internet using a browser as a proxy

Protected: Road Trip nach Portugal (II)

Learnings from Dynamic Electricity Pricing

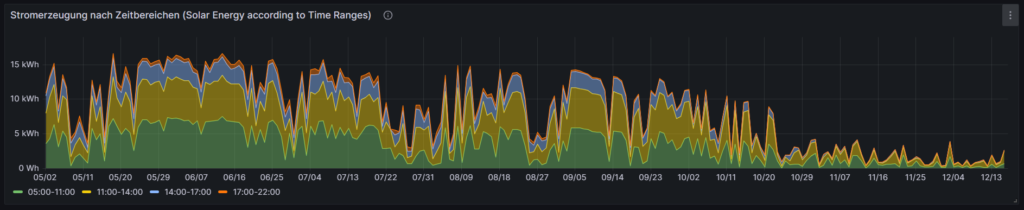

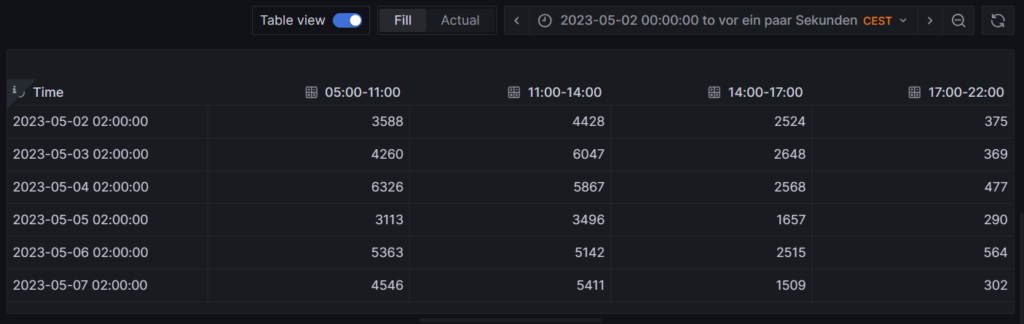

Executive Summary

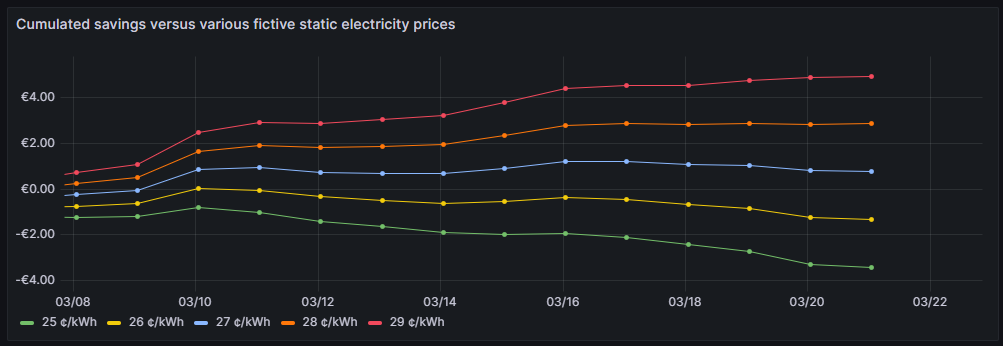

Unlike previous articles, I chose to split this new blog post into two parts. In the first part (Findings), I will elaborate on my findings as a consumer with dynamic electricity prices (day-ahead market) in connection with a small solar electricity generation unit. We will look at various visualizations that help to understand the impact and to gain some more insight in how dynamic electricity prices can be useful. In the second part (Annex: Technical Details), technically interested folks will find the respective queries and sample data with which they can replicate the findings or even do their own examination with the sample data. As in previous articles, Grafana is used in connection with a MariaDB database.

Background

On 1st of March 2024, I switched from a traditional electricity provider to one with dynamic day-ahead pricing, in my case, Tibber. I wanted to try this contractual model and see if I could successfully manage to shift chunks of high electricity consumption such as:

- … loading the battery-electric vehicle (BEV) or the plug-in hybrid car (PHEV)

- … washing clothes

- … drying clothes in the electric dryer

to those times of the day when the electricity price is lower. I also wanted to see if that makes economic sense for me. And, after all, it is fun to play around with data and gain new insights.

As my electricity supplier, I had chosen Tibber because they were the first one I got to know and they offer a device called Pulse which can connect a digital electricity meter to their infrastructure for metering and billing purposes. Furthermore, they do have an API [1] which allows me to read out my own data; that was very important for me. I understand that meanwhile, there are several providers Tibber that have similar models and comparable features.

Findings

Price and consumption patterns

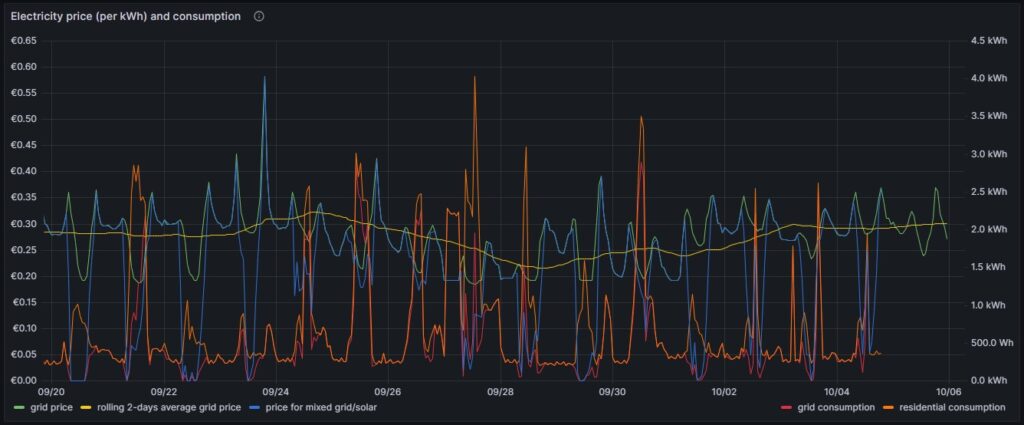

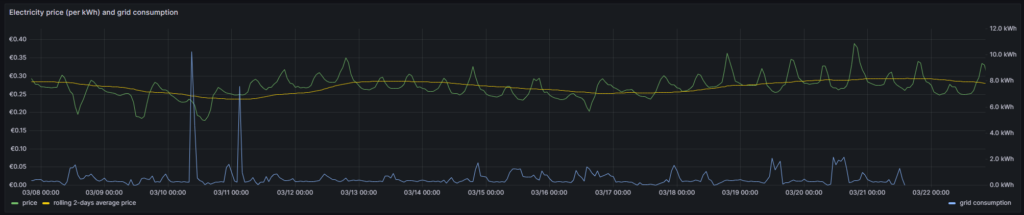

The graph below shows five curves and is an upgraded version of the respective graph in [6] visualizing data over two weeks:

As in [6], the green curve is the hourly price of the day-ahead market. It is well recognizable that the price has peaks in the evening and in the morning at breakfast time when residential consumption is high, but little solar energy is available in Germany. The yellow curve is a two-days floating average and shows that the average price is not below a fixed rate electricity contract, an important point that we shall discuss later. The orange curve is the consumption of my house; the higher peaks indicate times when I charged one of the cars using an ICCB (one phase, current: 10 A). The red curve shows the consumption of the grid. During the day, when there is sunshine, the red curve lies below the orange curve as a part of the overall consumed electricity comes from the solar panels. At nighttime, the red curve will exactly lie on the orange curve as there is no solar electricity generation. The blue curve shows the average electricity price per kWh based on a mixed calculation of the grid price and zero for my own solar electricity generation. One might argue whether zero is an adequate assumption as also solar panels cost money, but as I do have them installed already, I consider them to be sunk cost now. The blue curve show an interesting behavior: When my consumption is low, the blue curve shows a small average price. When the consumption of the house is less than the power that is generated by the solar panels, then the blue curve is flat zero. When, however, I consume a lot of power, then, the blue curve approaches the green curve. At nighttime when there is no solar energy generation, the blue curve lies identical with the green curve.

My goal is to consume more electricity in the times when either the green curve points to a low electricity price or when there is enough electricity generated by the solar panels so that my average price that I pay (blue curve) is reasonable low. This also explains why I mostly the ICCB (one phase, 10 A) to charge the cars as then, I still can get a good average price (although charging takes a lot more time then). I think that by looking at the curves, I have adapted my consumption pattern well to the varying electricity price.

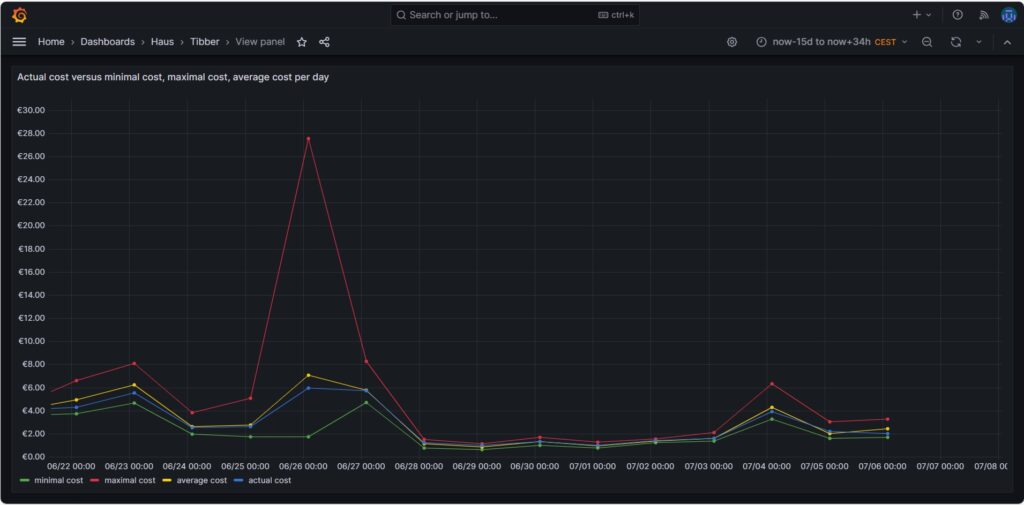

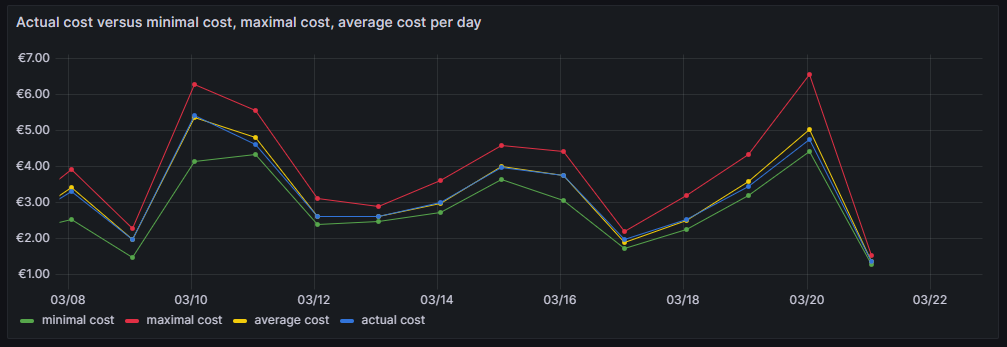

Actual cost, minimal cost, maximal cost, average cost per day

The graph above shows four curves. The green curve is the cost that I would have incurred if I had bought all the electricity of the respective day in the hour of the cheapest electricity price. This would only be possible if I had a battery that could bridge the remaining 23 hours of the day (and probably some hours more as the cheapest hour of the following day is not necessarily 00:00-01:00). The red curve is the cost that I would have incurred if I had bought all the electricity of the respective day in the hour of the most expensive electricity price. The yellow curve is the average cost of the respective day, the multiplication of the average price per kWh of that day by my consumed energy. The blue curve is the real cost that I have paid. If the blue curve is between the yellow curve and the green curve, then this is very good, and I have succeeded in shifting my consumption versus the hours with cheaper electricity. Without a battery, it is almost impossible to come very close to the green line.

The graph above shows one peculiarity as on 2024-06-26, there were some hours with an extremely high price, but that was due to a communication error that decoupled the auction in Germany from the rest of Europe [7], clearly (and hopefully) a one-time event.

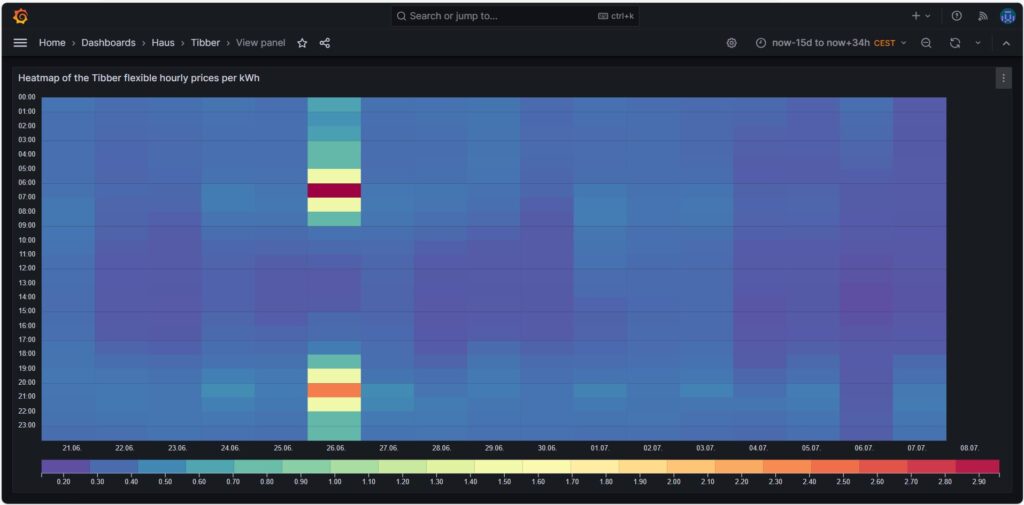

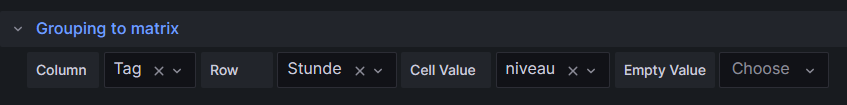

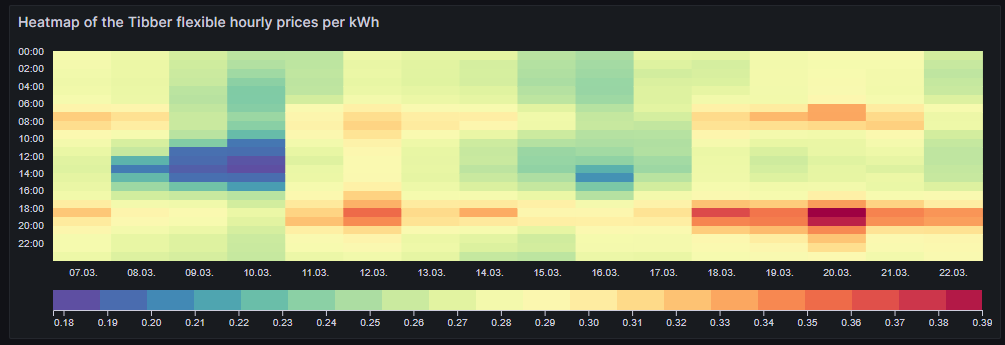

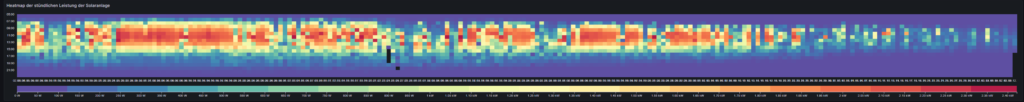

Price heatmap of the hourly prices per kWh

The one-time event with unusually high prices [7] is well visible in the price heatmap that was already introduced and explained in [6]. The one-time event overshadows all price fluctuations in the rest of the week.

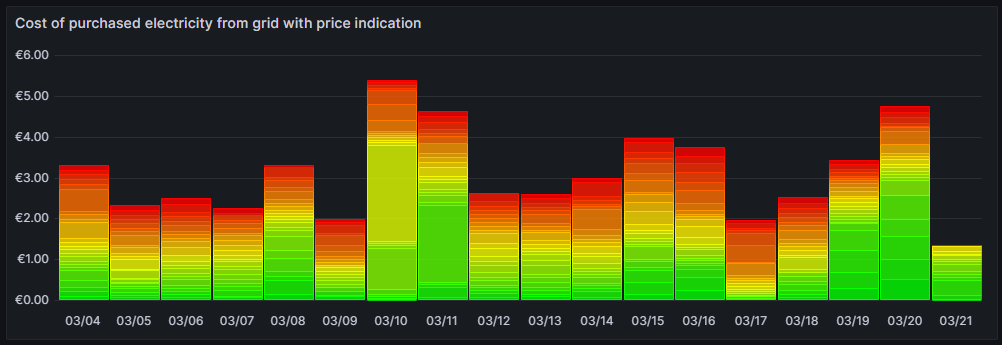

Cost and amount of purchased electricity from grid with price indication

The first graph shown below was already introduced and explained in [6] and shows the cost of purchased electricity from the grid in 24 rectangles, whereby each rectangle represents an hour. The order of the rectangles starts with the most expensive hour at the top and ends with the least expensive hour at the bottom. The larger the rectangle, the more money has been spent in the respective hour. I already explained in [6] that the goal should be – if electricity has to be purchased from the grid at all – the purchases ideally should happen in times when the price is low. In the graph those are the rectangles with green or at maximum yellow color. The rectangles with orange and red color indicate purchases during times of a high electricity price. In reality, one will not be able to avoid purchases at times of a high price completely. My experience is that in summer, I let the air conditioning systems run also in the evening hours when the electricity price is higher, just to keep the house at reasonable temperatures inside. Similarly, in spring and autumn, I opt for leaving the central heating switched off and try to heat the rooms in which I am usually with the air conditioning systems (in heating mode) as in spring and autumn, the difference in temperature between outside and inside is not too high, and the air conditioning systems will have a high efficiency.

Then, for 13-Oct (10/13), one can see that in fact I bought substantial electricity at high prices. The reason for that was that I returned home at night and had to re-charge the car for the next day. So, one cannot always avoid purchasing electricity at high prices.

The next chart offers another view on the same topic. Rather than looking at the cost of the purchased electricity, we look at the amount of purchased electricity. This offers an interesting insight. Except for 13-Oct, the amount of electricity that is purchased at hours with a high price, is less than 5 kWh. This means that with an energy-storage system (ESS), it should be possible to charge a battery of around 5 kWh during times of a lower electricity price and to discharge the battery and supply the house during times of a high electricity price so that ideally, there would be no or only minimally sized orange and red rectangles. Of course, this only makes sense if the low prices and the high prices per day differ substantially as the ESS will have losses of 10%…20%. And in fact, this is exactly an idea that I am planning to try out within the next months (so stay tuned).

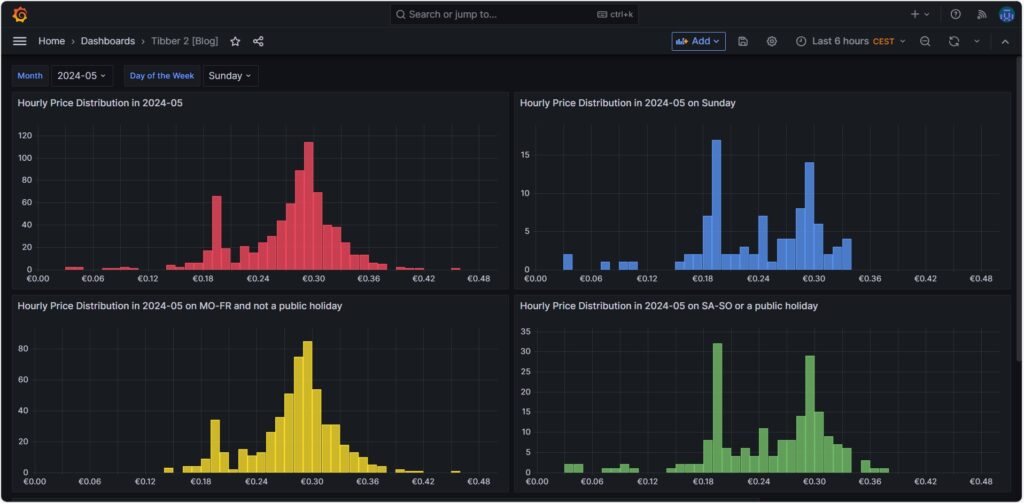

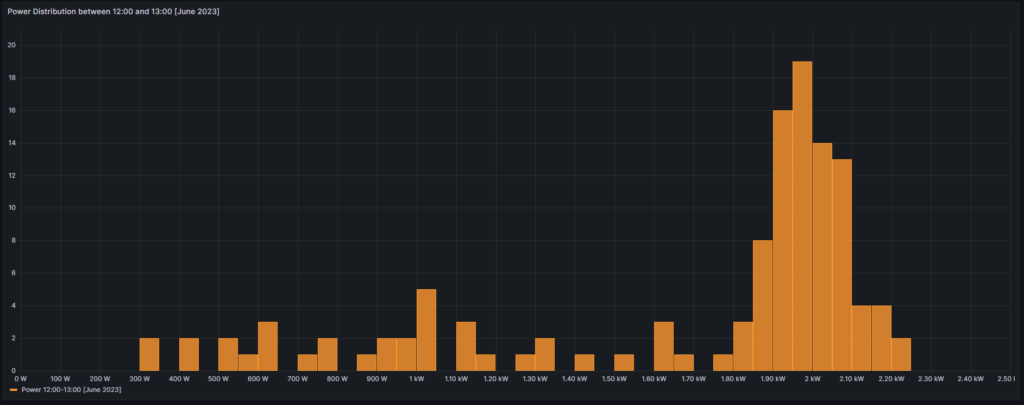

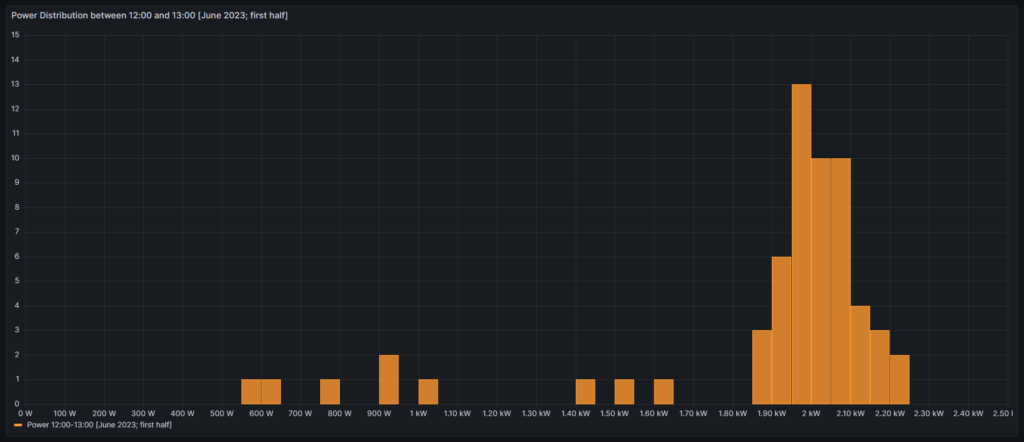

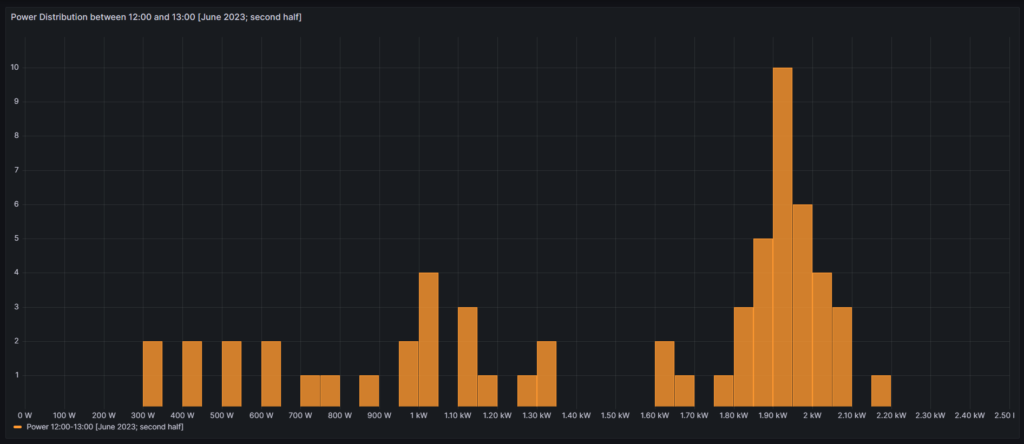

Hourly price distribution

Another finding which was interesting for me was that the price of electricity is not necessarily cheaper on the weekend (at least not in May 2024). One might assume that because industry consumption is low on the weekend, the price of electricity is lower. And that is true to some parts, as the lowest prices that occur within a whole month tend to happen on the weekend (green histogram). We can also see a peak at around 19 ¢/kWh in the green histogram which is much lower in the yellow histogram. However, there are also many hours with prices that are similar to the prices that we have during the week (yellow histogram).

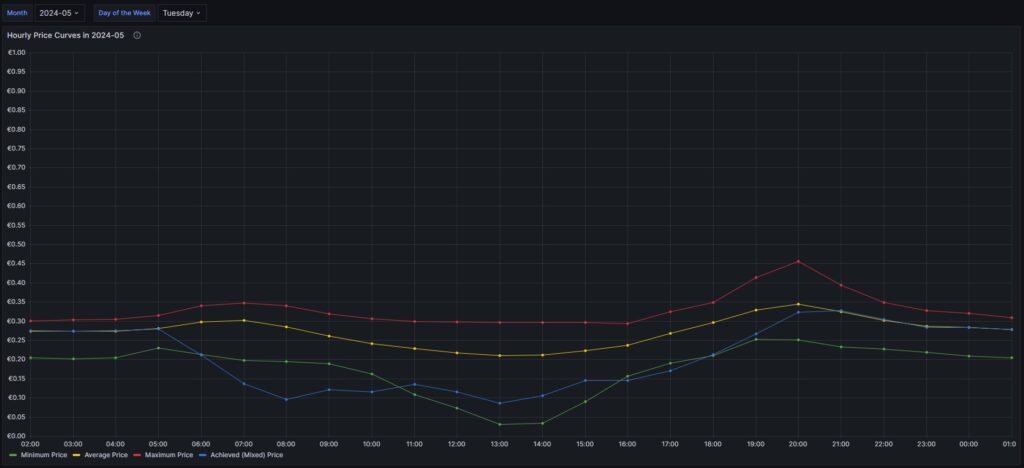

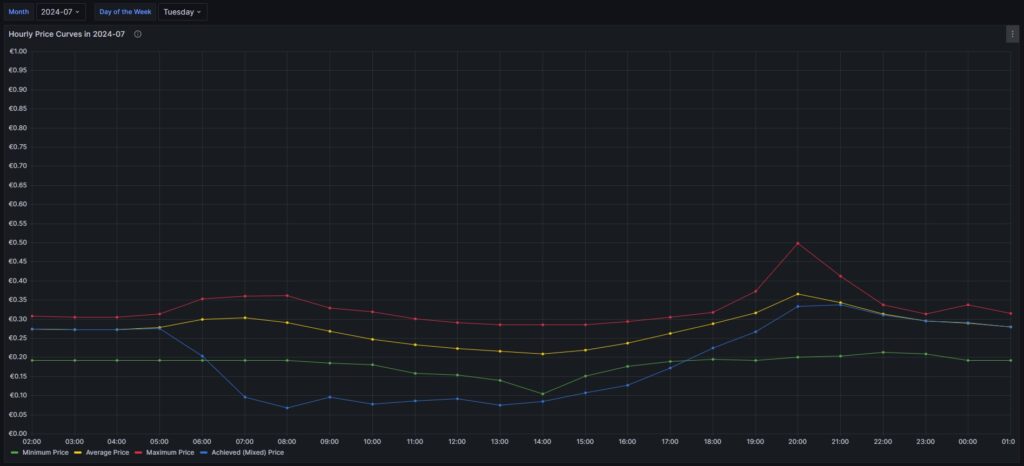

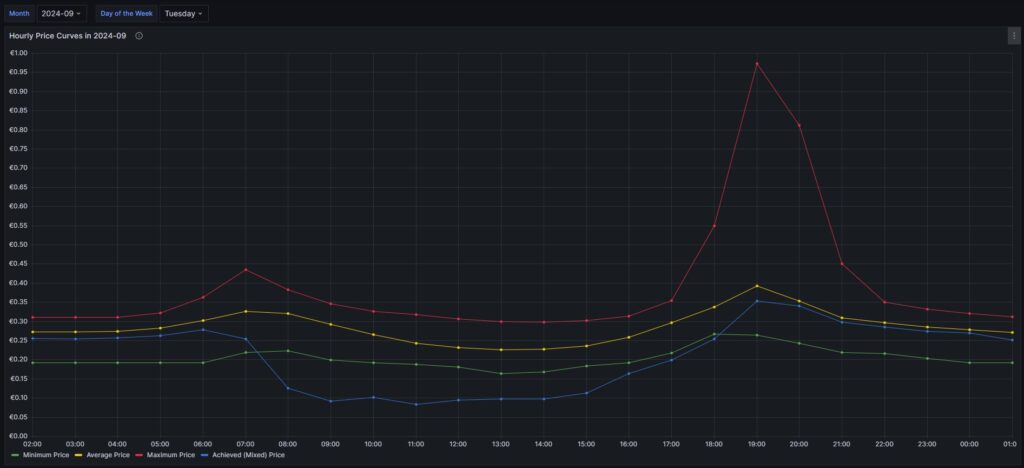

Monthly hourly price curves

The following three graphs show the hourly price curves over the hour of the day per month; the selected Day of the Week is irrelevant for this graph as only the variable Month is used.

The green curve shows the minimum hourly price per kWh that occurred in the selected month. The red curve shows the maximum hourly price per kWh that occurred in the selected month. The yellow curve shows the average hourly price per kWh that occurred in the selected month. The blue curve shows the average hourly price per kWh that I have experienced in the selected month, calculating my own solar electricity generation as “free of cost” (like the blue curve of the graph in chapter Price and consumption patterns).

There are a couple of interesting findings that can easily be seen in the curves:

- Prices tend to be higher in the early morning (06:00-08:00) and in the evening (18:00-21:00). The evening price peak is higher than the one in the morning.

- The price gap in the morning, but especially in the evening hours between the minimum and the maximum hourly price seems to increase from May to September. I do not yet know if this is because of the season, or if the market has become more volatile in general.

- In my personal situation (the solar modules face East), I am not bothered by the price peak in the morning, as I seem to have already quite a good power generation (blue curve descending in the morning). That is, however, different in the evening; then, I am personally affected by the price peak.

For me, this is an indication that it might make sense to install a battery and charge it during the day and discharge it especially in the evening hours (18:00-21:00) when the price peaks.

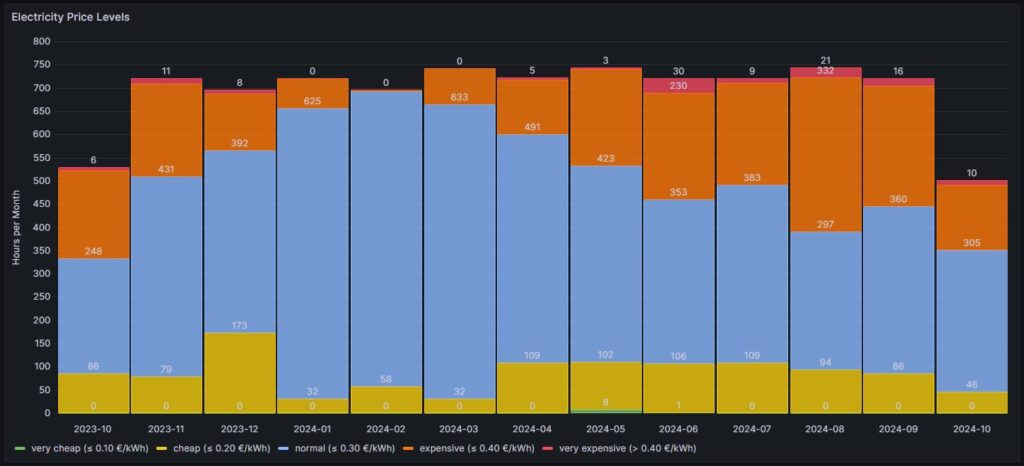

Electricity price levels

In order to look into the hourly prices from a different perspective, I have grouped the hourly prices into five categories:

- Price per kWh ≤10 ¢/kWh (green block), labelled as very cheap

- Price per kWh >10 ¢/kWh and ≤20 ¢/kWh (yellow block), labelled as cheap

- Price per kWh >20 ¢/kWh and ≤30 ¢/kWh (blue block), labelled as normal

- Price per kWh >30 ¢/kWh and ≤40 ¢/kWh (orange block), labelled as expensive

- Price per kWh >40 ¢/kWh (red block), labelled as very expensive

For each month (except for Oct 2023, Oct 2024) the sum of the blocks in different colors are the hours of the respective month; depending on the number of days per month, the number of hours vary, of course. Larger blocks correspond to more hours in the respective price category. Do not pay attention to the numbers listed in the graph in white color as some numbers are missing. I will give a numerical statistic below the graph.

| Month | very cheap (≤ 10 ¢/kWh) | cheap (≤ 20 ¢/kWh) | normal (≤ 30 ¢/kWh) | expensive (≤ 40 ¢/kWh) | very expensive (> 40 ¢/kWh) |

| 2023-10 | 0 | 86 | 248 | 189 | 6 |

| 2023-11 | 0 | 79 | 431 | 199 | 11 |

| 2023-12 | 0 | 173 | 392 | 123 | 8 |

| 2024-01 | 0 | 32 | 625 | 63 | 0 |

| 2024-02 | 0 | 58 | 635 | 3 | 0 |

| 2024-03 | 0 | 32 | 633 | 78 | 0 |

| 2024-04 | 0 | 109 | 491 | 117 | 5 |

| 2024-05 | 8 | 102 | 423 | 208 | 3 |

| 2024-06 | 1 | 106 | 353 | 230 | 30 |

| 2024-07 | 0 | 109 | 383 | 219 | 9 |

| 2024-08 | 0 | 94 | 297 | 332 | 21 |

| 2024-09 | 0 | 86 | 360 | 258 | 16 |

| 2024-10 | 0 | 46 | 305 | 141 | 10 |

Before I had generated this graph and the ones of the previous chapter, my initial assumption had been that in summer, the prices would go down compared to winter. And to some part, this is true. There is a higher number of hours in the category “cheap” (yellow block). However, the category “expensive” (orange block) increases even more in summer. And even the category “very expensive” (red block) becomes noticeable whereas the category “very cheap” (green block) does not manifest itself, not even in summer. I do not have an explanation for this; my assumption though is that maybe in winter, some fossil power stations are active that are switched off in summer and that therefore, the price is much more susceptible to the change in the electricity offer over the day. I suspect that during the day, there is mostly enough solar electricity generation, and in the evening, gas power stations need to be started that then determine the price level and lead to increased prices. However, I do not have any statistics underpinning this assumption.

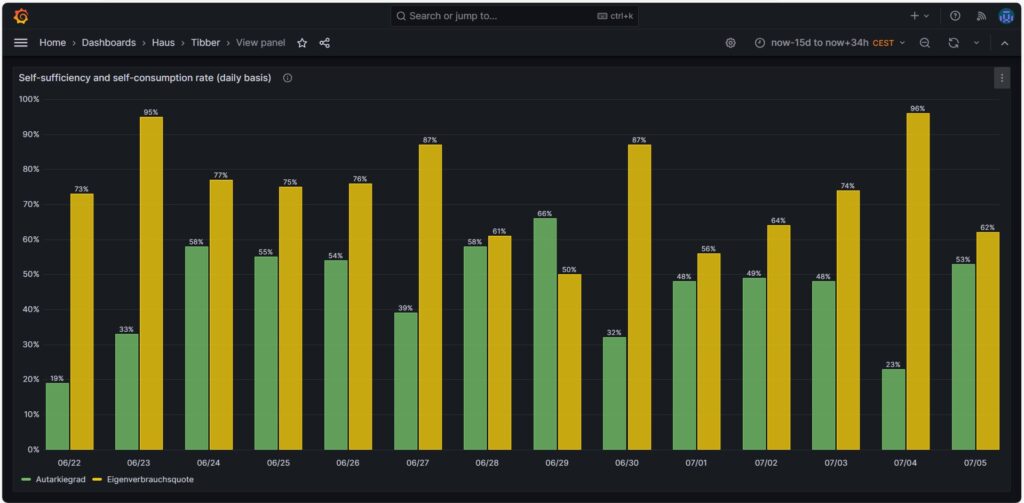

Self-sufficiency (Autarkie) and sef-consumption (Eigenverbrauch)

I am not much of a friend of looking at the values of self-sufficiency (de: Autarkie) and self-consumption (de: Eigenverbrauch), and this is for the following reasons:

- If you do not live in a place where an electricity grid is not available or where the grid is very unreliable, it does not make sense to strive for complete self-sufficiency. It is still economically best if you use your electricity during the day and consume electricity from the grid at night. Similarly, you will probably need to buy electricity from the grid in winter, at least in Central or Northern Europe as in winter, the output from your own solar electricity generation is usually insufficient to power the consumption of your house.

- The value for self-consumption varies strongly with your consumption pattern and with the solar intensity on the respective day. The only conclusion that you can draw from observing the self-consumption values over a longer time frame is:

- If your values are constantly low, then you probably have spent into too large of a solar system.

- If your values are constantly high or 100%, then it might make sense to add some more solar panels to your system.

Nevertheless, for the sake of completion, here are some example values from July of this year.

Conclusion

- The field of dynamic electricity pricing is technically very interesting and certainly helps to sharpen awareness for the need to consume electricity precisely then, when there is a lot of it available.

- Economically, having a dynamic electricity price can make sense under certain conditions. I side with [8] when I say:

- For a household without an electric car or another large electricity consumer, it does not make sense to use dynamic electricity pricing. The consumption of the washing machine, the dryer, and the dishwasher are not large enough to really draw a benefit.

- For a household with an electric car that has a large battery (e.g., some 80+ kWh, unfortunately not my use case), it makes sense then when you have the flexibility to charge the car during times with a low electricity price.

Annex: Technical Details

Preconditions

In order to use the approach described below, you should:

- … have access to a Linux machine or account

- … have a MySQL or MariaDB database server installed, configured, up and running

- … have the package Grafana [2] installed, configured, up and running

- … have the package Node-RED [3] installed, configured, up and running

- … have access to the data of your own electricity consumption and pricing information of your supplier or use the dataset linked below in this blog

- … have some basic knowledge of how to operate in a Linux environment and some basic understanding of shell scripts

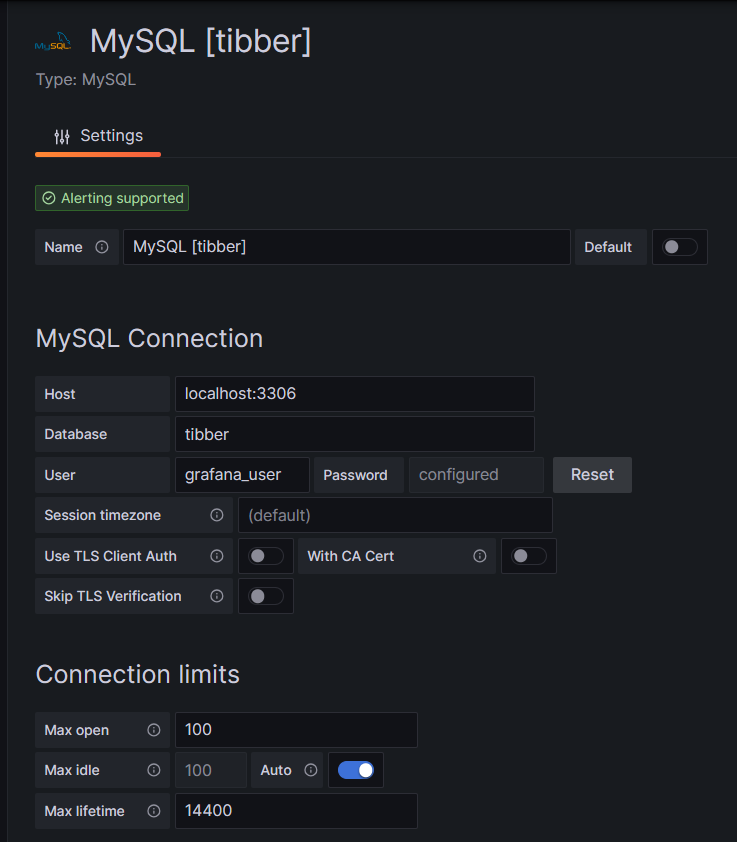

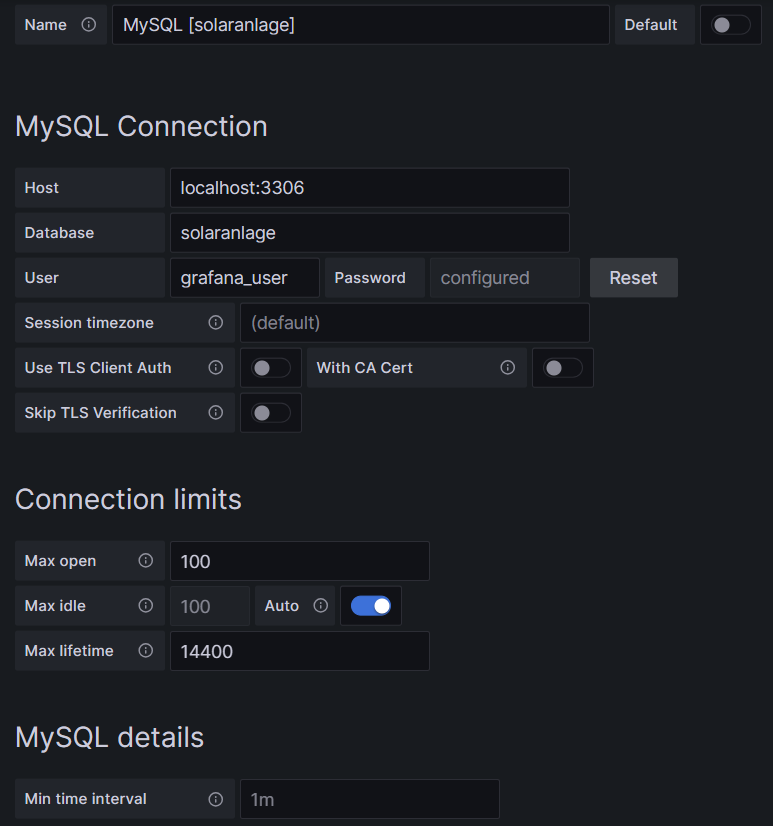

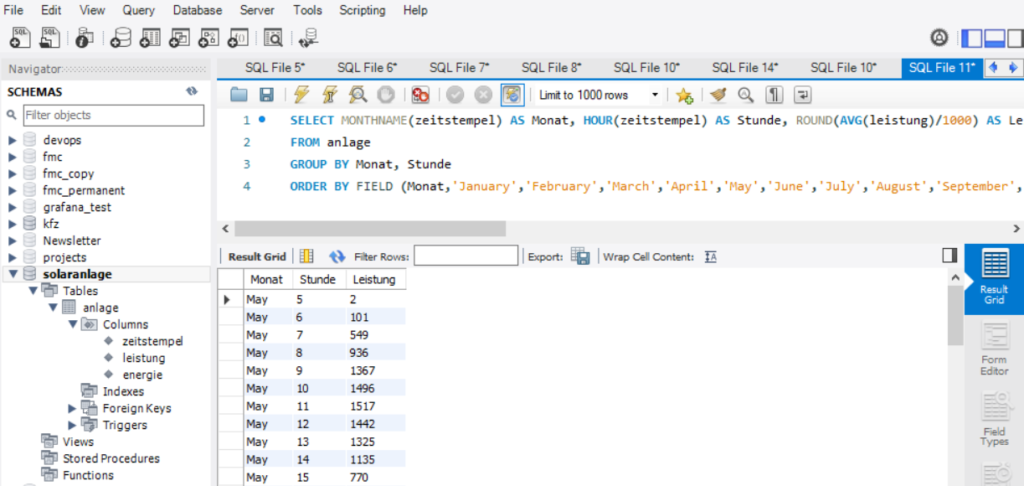

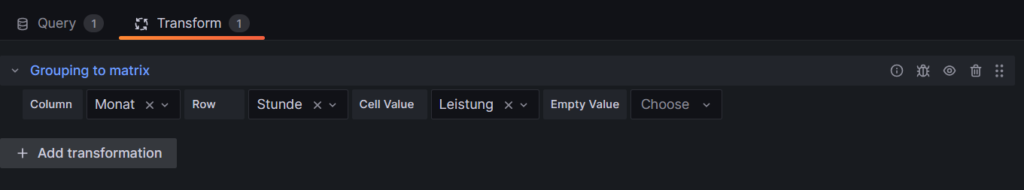

- … have read and understood the previous blog post [6], especially the part on how to connect Grafana to the MySQL or MariaDB database server

The Database

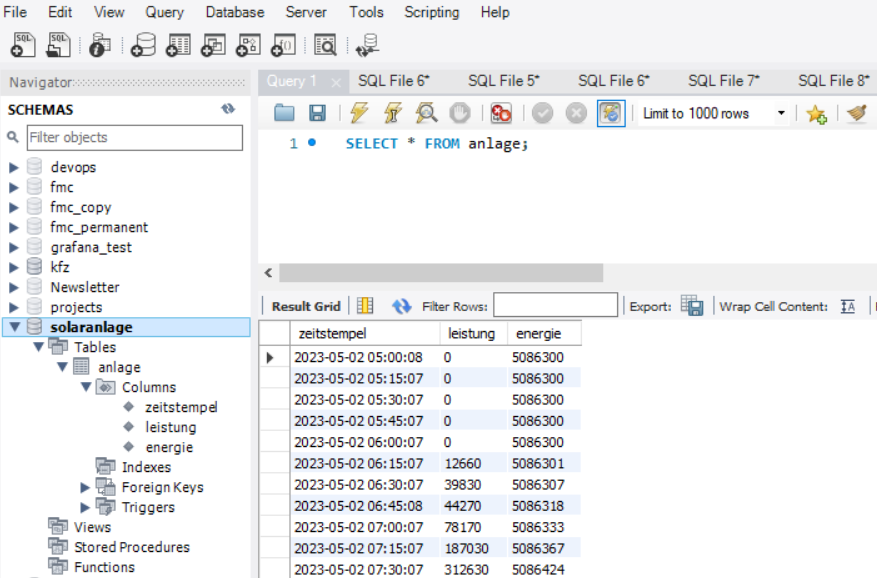

The base for the following visualizations is a fully populated MariaDB database with the following structure:

# Datenbank für Analysen mit Tibber

# V2.0; 2024-09-12, Gabriel Rüeck <gabriel@rueeck.de>, <gabriel@caipirinha.spdns.org>

# Delete existing databases

REVOKE ALL ON tibber.* FROM 'gabriel';

DROP DATABASE tibber;

# Create a new database

CREATE DATABASE tibber DEFAULT CHARSET utf8mb4 COLLATE utf8mb4_general_ci;

GRANT ALL ON tibber.* TO 'gabriel';

USE tibber;

SET default_storage_engine=Aria;

CREATE TABLE preise (uid INT UNSIGNED NOT NULL PRIMARY KEY AUTO_INCREMENT,\

zeitstempel DATETIME NOT NULL UNIQUE,\

preis DECIMAL(5,4),\

niveau ENUM('VERY_CHEAP','CHEAP','NORMAL','EXPENSIVE','VERY_EXPENSIVE'));

CREATE TABLE verbrauch (uid INT UNSIGNED NOT NULL PRIMARY KEY AUTO_INCREMENT,\

zeitstempel DATETIME NOT NULL UNIQUE,\

energie DECIMAL(5,3),\

kosten DECIMAL(5,4));

CREATE TABLE zaehler (uid INT UNSIGNED NOT NULL PRIMARY KEY AUTO_INCREMENT,\

zeitstempel TIMESTAMP NOT NULL DEFAULT UTC_TIMESTAMP,\

bezug DECIMAL(9,3) DEFAULT NULL,\

erzeugung DECIMAL(9,3) DEFAULT NULL,\

einspeisung DECIMAL(9,3) DEFAULT NULL);

Data Acquisition

Data for the MariaDB database is acquired with two different methods.

The tables preise and verbrauch are populated with the following bash script. You will need a valid API token of your Tibber account [4]; in this script, you need to replace _API_TOKEN with your personal API token ([4]) and _HOME_ID with your personal Home ID ([1]). The script further assumes that the user gabriel can login to the MySQL database without further authentication; this can be achieved by writing the MySQL login information in the file ~/.my.cnf.

#!/bin/bash

#

# Dieses Skript liest Daten für die Tibber-Datenbank und speichert das Ergebnis in einer MySQL-Datenbank ab.

# Das Skript wird einmal pro Tag aufgerufen.

#

# V2.0; 2024-09-12, Gabriel Rüeck <gabriel@rueeck.de>, <gabriel@caipirinha.spdns.org>

#

# CONSTANTS

declare -r MYSQL_DATABASE='tibber'

declare -r MYSQL_SERVER='localhost'

declare -r MYSQL_USER='gabriel'

declare -r TIBBER_API_TOKEN='_API_TOKEN'

declare -r TIBBER_API_URL='https://api.tibber.com/v1-beta/gql'

declare -r TIBBER_HOME_ID='_HOME_ID'

# VARIABLES

# PROGRAM

# Read price information for tomorrow

curl -s -S -H "Authorization: Bearer ${TIBBER_API_TOKEN}" -H "Content-Type: application/json" -X POST -d '{ "query": "{viewer {home (id: \"'"${TIBBER_HOME_ID}"'\") {currentSubscription {priceInfo {tomorrow {total startsAt level }}}}}}" }' "${TIBBER_API_URL}" | jq -r '.data.viewer.home.currentSubscription.priceInfo.tomorrow[] | .total, .startsAt, .level' | while read cost; do

read LINE

read level

timestamp=$(echo "${LINE%%+*}" | tr 'T' ' ')

# Determine timezone offset and store the UTC datetime in the database

offset="${LINE:23}"

mysql --default-character-set=utf8mb4 -B -N -r -D "${MYSQL_DATABASE}" -h ${MYSQL_SERVER} -u ${MYSQL_USER} -e "INSERT INTO preise (zeitstempel,preis,niveau) VALUES (DATE_SUB(\"${timestamp}\",INTERVAL \"${offset}\" HOUR_MINUTE),${cost},\"${level}\");"

done

# Read consumption information from the past 24 hours

curl -s -S -H "Authorization: Bearer ${TIBBER_API_TOKEN}" -H "Content-Type: application/json" -X POST -d '{ "query": "{viewer {home (id: \"'"${TIBBER_HOME_ID}"'\") {consumption (resolution: HOURLY, last: 24) {nodes {from to cost consumption}}}}}" }' "${TIBBER_API_URL}" | jq -r '.data.viewer.home.consumption.nodes[] | .from, .consumption, .cost' | while read LINE; do

read consumption

read cost

timestamp=$(echo "${LINE%%+*}" | tr 'T' ' ')

# Determine timezone offset and store the UTC datetime in the database

offset="${LINE:23}"

mysql --default-character-set=utf8mb4 -B -N -r -D "${MYSQL_DATABASE}" -h ${MYSQL_SERVER} -u ${MYSQL_USER} -e "INSERT INTO verbrauch (zeitstempel,energie,kosten) VALUES (DATE_SUB(\"${timestamp}\",INTERVAL \"${offset}\" HOUR_MINUTE),${consumption},${cost});"

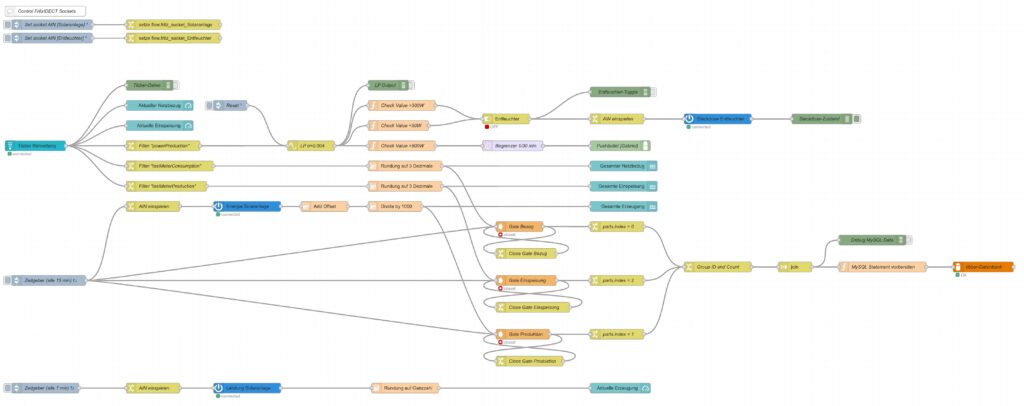

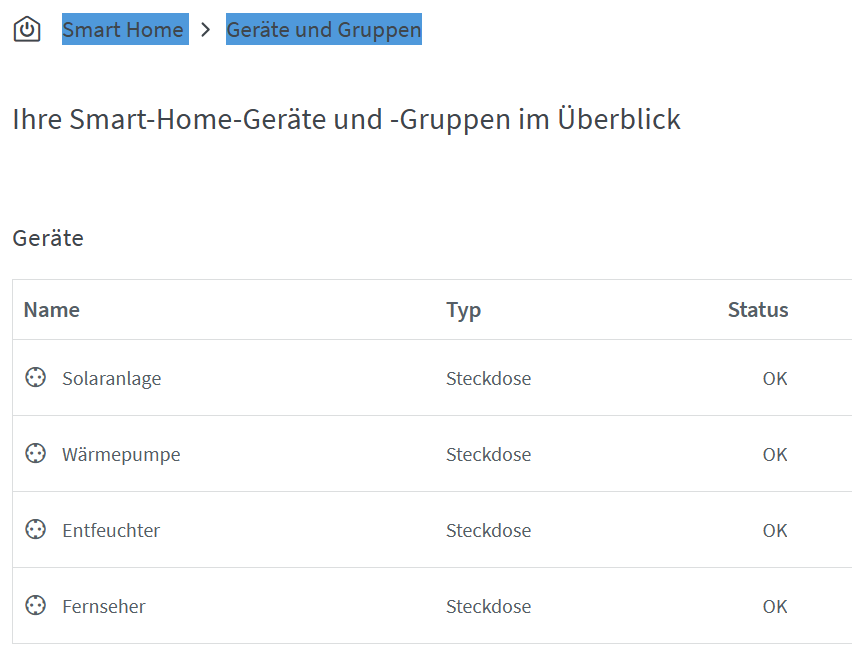

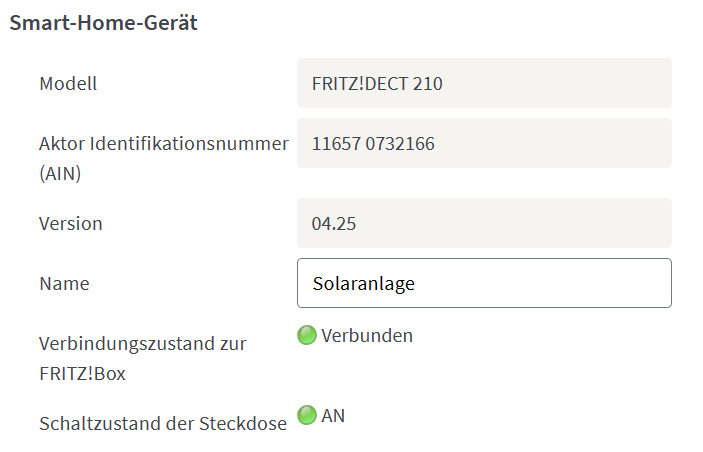

doneThe table zaehler is populated via a Node-RED flow that reads data from the Tibber API [4] as well as from the AVM socket FRITZ!DECT 210 and stores new values for the columns bezug, erzeugung, einspeisung every 15 minutes. I used a Node-RED flow for this because otherwise, I would have to program code for the access of the Tibber API [4] and include code [5] for the AVM socket FRITZ!DECT 210. However, I do plan to enlarge the functionality later with an Energy Storage System (ESS) and a battery, and so Node-RED seemed suitable to me. The flow is shown here; it contains some additional nodes apart from the now needed functionality, and it is still in development:

I have decided not to publish the JSON code here as I am not yet entirely familiar with Node-RED [3] and would not know how to remove my personal tokens from the JSON code.

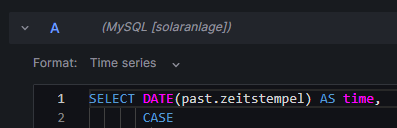

Queries for the Graphs

Price and Consumption Patterns

The graph is based on four queries that correspond to the green and yellow, to the red, to the orange and to the blue curve:

SELECT zeitstempel,

preis AS 'grid price',

AVG(preis) OVER (ORDER BY zeitstempel ROWS BETWEEN 47 PRECEDING AND CURRENT ROW) as 'rolling 2-days average grid price'

FROM preise;

SELECT zeitstempel, energie AS 'grid consumption' FROM verbrauch;

SELECT alt.zeitstempel,

(neu.bezug-alt.bezug+neu.erzeugung-alt.erzeugung-neu.einspeisung+alt.einspeisung) AS 'residential consumption'

FROM zaehler AS neu JOIN zaehler AS alt ON neu.uid=(alt.uid+4)

WHERE MINUTE(alt.zeitstempel)=0;

SELECT alt.zeitstempel,

ROUND(preise.preis*(neu.bezug-alt.bezug)/(neu.bezug-alt.bezug+neu.erzeugung-alt.erzeugung-neu.einspeisung+alt.einspeisung),4) AS 'price for mixed grid/solar'

FROM zaehler AS neu

JOIN zaehler AS alt ON neu.uid=(alt.uid+4)

LEFT JOIN preise ON DATE_FORMAT(preise.zeitstempel, "%Y%m%d%H%i")=DATE_FORMAT(alt.zeitstempel, "%Y%m%d%H%i")

WHERE MINUTE(alt.zeitstempel)=0

AND preise.zeitstempel>=DATE_SUB(CURRENT_DATE(),INTERVAL 30 DAY);Actual cost, minimal cost, maximal cost, average cost per day

This graph uses only one query:

SELECT DATE(preise.zeitstempel) AS 'date',

MIN(preise.preis)*SUM(verbrauch.energie) AS 'minimal cost',

MAX(preise.preis)*SUM(verbrauch.energie) AS 'maximal cost',

AVG(preise.preis)*SUM(verbrauch.energie) AS 'average cost',

SUM(verbrauch.kosten) AS 'actual cost'

FROM preise JOIN verbrauch ON verbrauch.zeitstempel=preise.zeitstempel

WHERE DATE(preise.zeitstempel)>'2024-03-03'

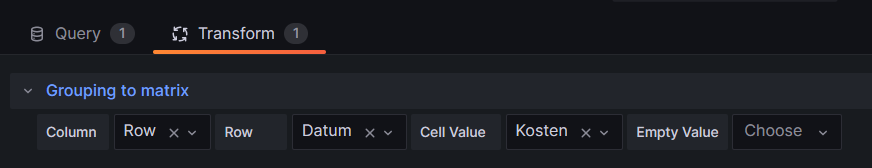

GROUP BY DATE(preise.zeitstempel);Cost and amount of purchased electricity from grid with price indication

The first graph (Cost of purchased electricity from grid with price indication) uses the query:

SELECT DATE_FORMAT(preise.zeitstempel,'%m/%d') AS 'Datum',

ROW_NUMBER() OVER (PARTITION BY DATE(preise.zeitstempel) ORDER BY preise.preis ASC) AS 'Row',

verbrauch.kosten AS 'Kosten'

FROM preise JOIN verbrauch ON verbrauch.zeitstempel=preise.zeitstempel

WHERE DATE(preise.zeitstempel)>DATE_ADD(CURDATE(), INTERVAL -15 DAY)

ORDER BY DATE(preise.zeitstempel) ASC, preise.preis ASC;The second graph (Amount of purchased electricity from grid with price indication) uses the query:

SELECT DATE_FORMAT(preise.zeitstempel,'%m/%d') AS 'Datum',

ROW_NUMBER() OVER (PARTITION BY DATE(preise.zeitstempel) ORDER BY preise.preis ASC) AS 'Row',

verbrauch.energie AS 'Energie'

FROM preise JOIN verbrauch ON verbrauch.zeitstempel=preise.zeitstempel

WHERE DATE(preise.zeitstempel)>DATE_ADD(CURDATE(), INTERVAL -15 DAY)

ORDER BY DATE(preise.zeitstempel) ASC, preise.preis ASC;Hourly price distribution

The red histogram uses the query:

SELECT preis FROM preise WHERE DATE_FORMAT(zeitstempel,'%Y-%m')='${Month}';The blue histogram uses the query:

SELECT preis FROM preise

WHERE DATE_FORMAT(zeitstempel,'%Y-%m')='${Month}'

AND DAYNAME(zeitstempel)='${Weekday}';The yellow histogram uses the query:

SELECT preis FROM preise

WHERE DATE_FORMAT(zeitstempel,'%Y-%m')='${Month}'

AND WEEKDAY(zeitstempel)<5

AND DATE(zeitstempel) NOT IN (SELECT datum FROM aux_feiertage);The green histogram uses the query:

SELECT preis FROM preise

WHERE DATE_FORMAT(zeitstempel,'%Y-%m')='${Month}'

AND WEEKDAY(zeitstempel)>=5

OR DATE(zeitstempel) IN (SELECT datum FROM aux_feiertage WHERE DATE_FORMAT(zeitstempel,'%Y-%m')='${Month}');The tables use these variables on the respective Grafana dasboard as well as the additional helper table aux_feiertage (indicating national holidays) in the MariaDB database.

The variable Month on the Grafana dasboard has the query:

SELECT DISTINCT(DATE_FORMAT(zeitstempel,'%Y-%m')) FROM preise;The variable Weekday on the Grafana dasboard has the query:

SELECT aux_wochentage.wochentag AS Weekday

FROM aux_wochentage JOIN aux_sprachen ON aux_sprachen.id_sprache=aux_wochentage.id_sprache

WHERE aux_sprachen.sprachcode='en'

ORDER BY aux_wochentage.id_tag ASC;The last query also uses the tables aux_wochentage and aux_sprachen in order to offer a multi-lingual interface (not really necessary for the functionality). The tables are:

CREATE TABLE aux_wochentage (id_tag TINYINT UNSIGNED NOT NULL,\

id_sprache TINYINT UNSIGNED NOT NULL,\

wochentag VARCHAR(30) NOT NULL);

CREATE TABLE aux_sprachen (id_sprache TINYINT UNSIGNED NOT NULL PRIMARY KEY,\

sprachcode CHAR(2),\

sprache VARCHAR(30));

CREATE TABLE aux_feiertage (uid SMALLINT UNSIGNED NOT NULL PRIMARY KEY AUTO_INCREMENT,\

datum DATE NOT NULL,\

beschreibung VARCHAR(50));

INSERT INTO aux_sprachen VALUES (0,'en','English'),\

(1,'de','Deutsch'),\

(2,'pt','Português'),\

(3,'zh','中文');

INSERT INTO aux_wochentage VALUES (0,0,'Monday'),\

(1,0,'Tuesday'),\

(2,0,'Wednesday'),\

(3,0,'Thursday'),\

(4,0,'Friday'),\

(5,0,'Saturday'),\

(6,0,'Sunday'),\

(0,1,'Montag'),\

(1,1,'Dienstag'),\

(2,1,'Mittwoch'),\

(3,1,'Donnerstag'),\

(4,1,'Freitag'),\

(5,1,'Samstag'),\

(6,1,'Sonntag'),\

(0,2,'segunda-feira'),\

(1,2,'terça-feira'),\

(2,2,'quarta-feira'),\

(3,2,'quinta-feira'),\

(4,2,'sexta-feira'),\

(5,2,'sábado'),\

(6,2,'domingo'),\

(0,3,'星期一'),\

(1,3,'星期二'),\

(2,3,'星期三'),\

(3,3,'星期四'),\

(4,3,'星期五'),\

(5,3,'星期六'),\

(6,3,'星期日');

INSERT INTO aux_feiertage (datum, beschreibung) VALUES ('2023-01-01','Neujahr'),\

('2023-01-06','Heilige Drei Könige'),\

('2023-04-07','Karfreitag'),\

('2023-04-10','Ostermontag'),\

('2023-05-01','Tag der Arbeit'),\

('2023-05-18','Christi Himmelfahrt'),\

('2023-05-29','Pfingstmontag'),\

('2023-06-08','Fronleichnam'),\

('2023-08-15','Mariä Himmelfahrt'),\

('2023-10-03','Tag der Deutschen Einheit'),\

('2023-11-01','Allerheiligen'),\

('2023-12-25','1. Weihnachtstag'),\

('2023-12-26','2. Weihnachtstag'),\

('2024-01-01','Neujahr'),\

('2024-01-06','Heilige Drei Könige'),\

('2024-03-29','Karfreitag'),\

('2024-04-01','Ostermontag'),\

('2024-05-01','Tag der Arbeit'),\

('2024-05-09','Christi Himmelfahrt'),\

('2024-05-20','Pfingstmontag'),\

('2024-05-30','Fronleichnam'),\

('2024-08-15','Mariä Himmelfahrt'),\

('2024-10-03','Tag der Deutschen Einheit'),\

('2024-11-01','Allerheiligen'),\

('2024-12-25','1. Weihnachtstag'),\

('2024-12-26','2. Weihnachtstag'),\

('2025-01-01','Neujahr'),\

('2025-01-06','Heilige Drei Könige'),\

('2025-04-18','Karfreitag'),\

('2025-04-21','Ostermontag'),\

('2025-05-01','Tag der Arbeit'),\

('2025-05-29','Christi Himmelfahrt'),\

('2025-06-09','Pfingstmontag'),\

('2025-06-19','Fronleichnam'),\

('2025-08-15','Mariä Himmelfahrt'),\

('2025-10-03','Tag der Deutschen Einheit'),\

('2025-11-01','Allerheiligen'),\

('2025-12-25','1. Weihnachtstag'),\

('2025-12-26','2. Weihnachtstag');Monthly hourly price curves

This graph uses only one query:

SELECT preise.zeitstempel AS 'time',

MIN(preise.preis) AS 'Minimum Price',

ROUND(AVG(preise.preis),4) AS 'Average Price',

MAX(preise.preis) AS 'Maximum Price',

ROUND((SUM(verbrauch.kosten)/(SUM(neu.bezug)-SUM(alt.bezug)+SUM(neu.erzeugung)-SUM(alt.erzeugung)-SUM(neu.einspeisung)+SUM(alt.einspeisung))),4) AS 'Achieved (Mixed) Price'

FROM preise

INNER JOIN verbrauch ON preise.zeitstempel=verbrauch.zeitstempel

LEFT JOIN zaehler AS alt ON DATE_FORMAT(alt.zeitstempel,"%Y%m%d%H%i")=DATE_FORMAT(preise.zeitstempel,"%Y%m%d%H%i")

INNER JOIN zaehler AS neu ON neu.uid=(alt.uid+4)

WHERE DATE_FORMAT(preise.zeitstempel,'%Y-%m')='${Month}'

GROUP BY TIME(preise.zeitstempel);It is important to keep in mind that the results from the query show the timespan of the first day of the month (and only the first day) between 00:00 UTC and 23:59 UTC; hence the time window on the Grafana dashboard has to be chosen accordingly (e.g. from 2024-07-01 02:00:00 to 2024-07-02 01:00:00 in the timezone CEST).

Electricity price levels

This graph uses only one query:

SELECT DATE_FORMAT(zeitstempel,'%Y-%m') AS 'Month',

COUNT(CASE WHEN preis<=0.1 THEN preis END) AS 'very cheap (≤ 0.10 €/kWh)',

COUNT(CASE WHEN preis>0.1 AND preis<=0.2 THEN preis END) AS 'cheap (≤ 0.20 €/kWh)',

COUNT(CASE WHEN preis>0.2 AND preis<=0.3 THEN preis END) AS 'normal (≤ 0.30 €/kWh)',

COUNT(CASE WHEN preis>0.3 AND preis<=0.4 THEN preis END) AS 'expensive (≤ 0.40 €/kWh)',

COUNT(CASE WHEN preis>0.4 THEN preis END) AS 'very expensive (> 0.40 €/kWh)'

FROM preise

GROUP BY Month;Self-sufficiency (Autarkie) and sef-consumption (Eigenverbrauch)

This graph uses only one query:

SELECT DATE_FORMAT(alt.zeitstempel,'%m/%d') AS 'Datum',

ROUND((neu.erzeugung-alt.erzeugung-neu.einspeisung+alt.einspeisung)*100/(neu.bezug-alt.bezug+neu.erzeugung-alt.erzeugung-neu.einspeisung+alt.einspeisung)) AS 'Autarkiegrad',

ROUND((neu.erzeugung-alt.erzeugung-neu.einspeisung+alt.einspeisung)*100/(neu.erzeugung-alt.erzeugung)) AS 'Eigenverbrauchsquote'

FROM zaehler AS neu

JOIN zaehler AS alt ON neu.uid=(alt.uid+96)

WHERE HOUR(alt.zeitstempel)=0

AND MINUTE(alt.zeitstempel)=0

AND alt.zeitstempel>=DATE_SUB(CURRENT_DATE(),INTERVAL 14 DAY);

Files

The following dataset was used for the graphs:

Sources

- [1] = Tibber Developer

- [2] = Download Grafana | Grafana Labs

- [3] = Node-RED

- [4] = Tibber Developer: Communicating with the API

- [5] = Smarthome: AVM-Steckdosen per Skript auslesen

- [6] = Grafana Visualizations (Part 2)

- [7] = Strom: heute Extrempreise für Kunden im EPEX SPOT – ISPEX

- [8] = Dynamischer Stromtarif – Warum fallen ALLE darauf rein?

Disclaimer

- Program codes and examples are for demonstration purposes only.

- Program codes are not recommended be used in production environments without further enhancements in terms of speed, failure-tolerance or cyber-security.

- While program codes have been tested, they might still contain errors.

- I am neither affiliated nor linked to companies named in this blog post.

Grafana Visualizations (Part 2)

Executive Summary

In this article, we use Grafana in order to examine real-world data of electricity consumption stored in a MariaDB database. As dynamic pricing (day-ahead market) is used, we also try to investigate how well I have fared so far with dynamic pricing.

Background

On 1st of March 2024, I switched from a traditional electricity provider to one with dynamic day-ahead pricing, in my case, Tibber. I wanted to try this contractual model and see if I could successfully manage to shift chunks of high electricity consumption such as:

- … loading the battery-electric vehicle (BEV) or the plug-in hybrid car (PHEV)

- … washing clothes

- … drying clothes in the electric dryer

to those times of the day when the electricity price is lower. I also wanted to see if that makes economic sense for me. And, after all, it is fun to play around with data and gain new insights.

As my electricity supplier, I had chosen Tibber because they were the first one I got to know and they offer a device called Pulse which can connect a digital electricity meter to their infrastructure for metering and billing purposes. Furthermore, they do have an API [1] which allows me to read out my own data; that was very important for me. I understand that meanwhile, there are several providers Tibber that have similar models and comparable features.